Last refreshed: May 15, 2026

Two partnership and policy stories from the Anthropic desk that haven’t been covered here yet. Both have meaningful implications for how Claude reaches enterprise users and how governments are thinking about AI security risk.

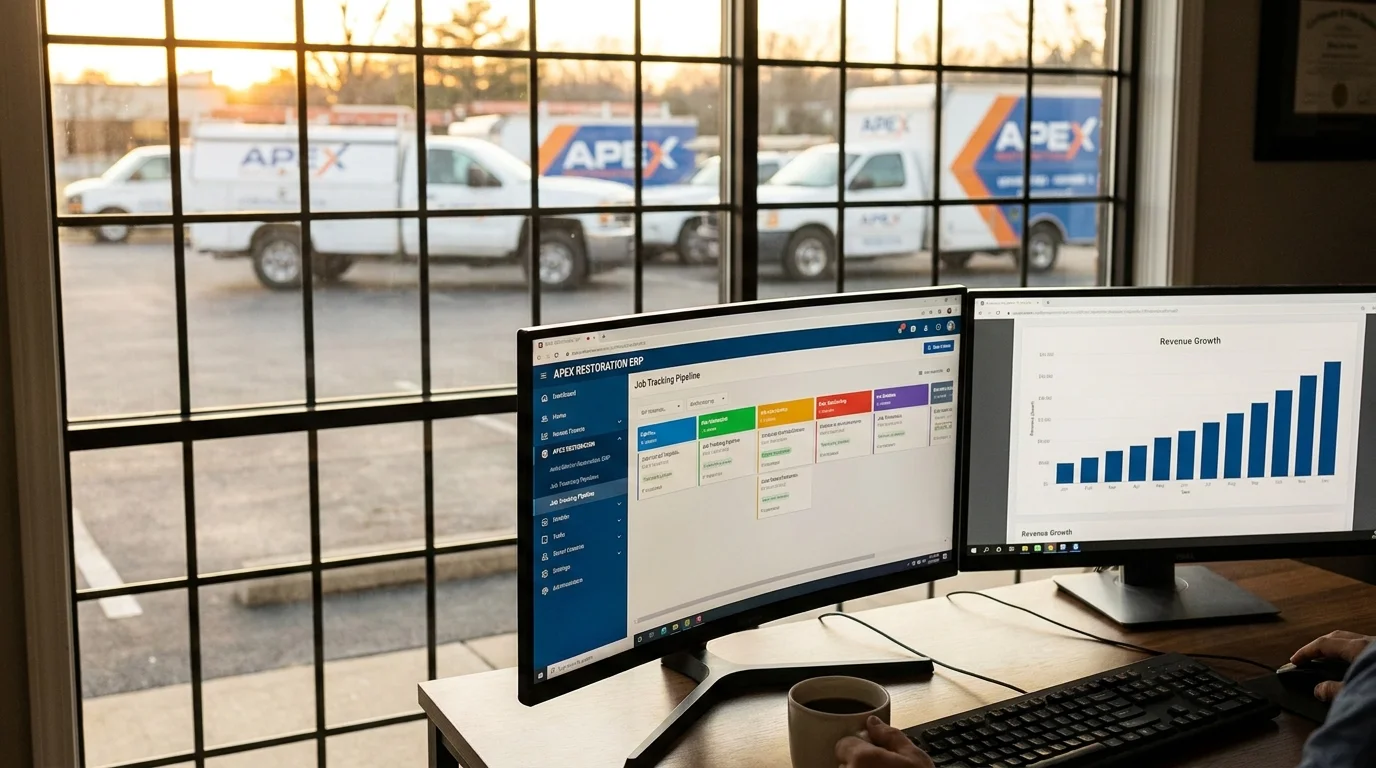

Part 1: Snowflake’s $200M Partnership — 12,600 Enterprise Customers as Distribution

In December 2025, Anthropic and Snowflake announced a multi-year, $200M partnership making Claude models available to Snowflake’s 12,600+ enterprise customers across all three major clouds. The partnership makes Claude the AI layer inside Snowflake’s data platform for a client base concentrated in financial services, healthcare, and life sciences — the three regulated verticals where Anthropic has been most deliberately building.

The specific products:

- Snowflake Intelligence — powered by Claude Sonnet 4.6, providing conversational data analysis directly within the Snowflake environment

- Snowflake Cortex AI Functions — supporting Claude Opus 4.5 and newer models for structured AI functions across the Snowflake data warehouse

Source: anthropic.com/news/snowflake-anthropic-expanded-partnership

The number that matters most here isn’t $200M — it’s 12,600. That’s the customer count Snowflake brings as a distribution channel. These are enterprise organizations that have already made a procurement decision to standardize on Snowflake for data infrastructure. Embedding Claude inside that infrastructure means Claude becomes the AI system those organizations reach for when they need to query, analyze, or reason about their own data — without requiring a separate AI platform procurement decision.

This is the distribution model that makes enterprise AI market share move: not direct sales to 12,600 enterprises, but a single partnership that makes Claude the default AI layer inside infrastructure those enterprises already use. Snowflake customers in financial services can run Claude-powered compliance analysis on their own Snowflake data. Healthcare organizations can run Claude-powered analysis on patient data that stays within their existing Snowflake security perimeter.

The regulated-industry focus is deliberate. Financial services, healthcare, and life sciences are the verticals where data governance requirements are strictest — and where the ability to run AI on your own data, within your own security perimeter, without moving that data to an external AI service, is the deciding factor in procurement. Snowflake’s existing data residency and compliance infrastructure makes that possible in a way that a direct Anthropic API call often doesn’t.

Part 2: India’s RBI Warning + The Glasswing Gap

In late April 2026, India’s Finance Ministry and the Reserve Bank of India convened meetings on cybersecurity preparedness that specifically referenced Claude Mythos risk. Finance Minister Nirmala Sitharaman met with bank executives at North Block to advise pre-emptive hardening. The RBI began consulting with global regulators. CERT-In, major telcos, and fintechs ran parallel risk assessments.

Source: Business Standard, April 27, 2026 — business-standard.com

The structural issue underneath the news: Project Glasswing — Anthropic’s defensive cybersecurity consortium that provides early access to Mythos for defensive purposes — named the following founding partners: AWS, Apple, Cisco, CrowdStrike, Google, JPMorgan Chase, Microsoft, and Nvidia. Zero Indian firms. India is Anthropic’s second-largest market globally. Its government is actively warning its financial sector about Mythos risk. And no Indian organization is in the defender consortium that gets early access to the model and the defensive research that goes with it.

This is not a small gap. The Mozilla Firefox result (271 vulnerabilities in a month, including 20-year-old bugs) demonstrated what Mythos can do in a real production codebase. If that capability is available to offensive actors — or if non-partner organizations don’t have the same early visibility into what Mythos can find — organizations outside the Glasswing partner network are in a different risk position than those inside it.

The Tension This Creates

Anthropic’s distribution into India is accelerating. Cognizant deployed Claude across 350,000 employees. Razorpay built its Agent Studio on the Claude Agent SDK and wired UPI rails through Claude as an authorized payment agent with NPCI. Air India, CRED, and Swiggy are named enterprise customers.

Meanwhile, India’s government is warning its financial sector about the offensive potential of Claude Mythos, no Indian firm is in the Glasswing defender consortium, and INR-denominated pricing (with 18% GST) puts the effective Pro subscription cost at approximately ₹2,240/month for Indian users. That is a meaningful friction point in a country Anthropic describes as its second-largest market.
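For readers who want to see how much of that ₹2,240 is tax, here is a minimal sketch that backs the pre-tax amount out of a tax-inclusive price. The 18% rate and the approximate ₹2,240 figure come from the paragraph above; the derived base and GST amounts are illustrative arithmetic only, not published list prices, and the function name is hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

GST_RATE = Decimal("0.18")            # rate cited in the article
GROSS_MONTHLY_INR = Decimal("2240")   # approximate tax-inclusive figure cited in the article


def pre_tax_price(gross: Decimal, rate: Decimal) -> Decimal:
    """Back out the pre-tax price from a tax-inclusive price, rounded to paise."""
    return (gross / (Decimal(1) + rate)).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )


# Derived values: assumptions that follow only from the two inputs above.
base = pre_tax_price(GROSS_MONTHLY_INR, GST_RATE)
gst_component = GROSS_MONTHLY_INR - base

print(f"Implied pre-tax price: INR {base}")
print(f"Implied GST component: INR {gst_component}")
```

Under these assumptions, roughly ₹342 of the monthly figure is GST, so the tax alone adds a noticeable margin on top of the underlying subscription price.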

The distribution is running faster than the partnership infrastructure is opening. Either Project Glasswing expands to include Indian financial institutions and cybersecurity organizations, or India builds its own parallel defensive capacity, or the gap becomes a structural political fact in Anthropic’s India relationship.

India’s government isn’t opposed to Claude. It’s actively adopting it across both the public and private sectors. The RBI/Finance Ministry meetings were framed as hardening preparation, not restriction. But the asymmetry (India as a top-two market, zero Indian firms in the defender consortium) is conspicuous enough that it will eventually require a response.

Frequently Asked Questions

What does the Snowflake-Anthropic partnership include?

A multi-year, $200M agreement announced in December 2025, making Claude models available to Snowflake’s 12,600+ enterprise customers. Snowflake Intelligence is powered by Claude Sonnet 4.6 for conversational data analysis (the model named at the time of the partnership announcement; verify the current model with Snowflake). Snowflake Cortex AI Functions supports Claude Opus 4.5 and newer models. The focus is regulated industries: financial services, healthcare, and life sciences.

What is Project Glasswing?

Project Glasswing is Anthropic’s defensive cybersecurity program that provides early access to Claude Mythos Preview for organizations working to defend critical infrastructure. Named founding partners include AWS, Apple, Cisco, CrowdStrike, Google, JPMorgan Chase, Microsoft, and Nvidia. Access is by invitation only, with no self-serve sign-up. No Indian organizations are currently named as Glasswing partners.

Why is India’s government warning about Claude Mythos if India is Anthropic’s second-largest market?

The Indian government’s meetings (RBI, Finance Ministry, CERT-In) were framed as defensive preparation, not restriction. The concern is that Mythos-tier capability could be used offensively against Indian financial infrastructure — a legitimate risk that applies regardless of Anthropic’s commercial relationship with India. The tension is that organizations inside Project Glasswing get early access to defensive research while India’s financial sector, with no Glasswing presence, does not.