There’s a moment every serious Claude user hits eventually.

You’re mid-session. You’ve built something — a workflow, a content pipeline, a research thread — and you’re deep in it. Then the model goes quiet. Or returns something strange. Or just stops.

You didn’t break anything. You ran out of room.

What Actually Happened (The Token Wall)

Every AI conversation has a context window: a fixed budget of tokens (roughly, word fragments) that the model can hold at once. Think of it like a whiteboard. As a session gets longer, the whiteboard fills up: your messages, the model’s responses, tool outputs, task lists, code snippets. All of it takes space.

When you get close to the limit, the model doesn’t always fail gracefully. Sometimes it just can’t fit the new request alongside all the history. It tries. It might start a response and stop. It might return something vague. It looks broken. It isn’t — it’s full.
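To make the whiteboard concrete, here is a minimal sketch of the arithmetic. It is an illustration, not how any particular model counts tokens: the four-characters-per-token ratio is a rough assumption, and `ContextBudget` and the 1,000-token window are hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN) if text else 0


@dataclass
class ContextBudget:
    window: int    # total tokens the session can hold (hypothetical figure)
    used: int = 0  # tokens already on the whiteboard

    def add(self, text: str) -> None:
        """Record a message, response, or tool output in the history."""
        self.used += estimate_tokens(text)

    @property
    def remaining(self) -> int:
        return max(0, self.window - self.used)

    def fits(self, prompt: str, expected_output_tokens: int) -> bool:
        """True if the new prompt plus the expected reply still fit."""
        return estimate_tokens(prompt) + expected_output_tokens <= self.remaining


budget = ContextBudget(window=1000)
budget.add("x" * 3600)  # a long history, about 900 estimated tokens
small_reply_fits = budget.fits("x" * 40, expected_output_tokens=50)
large_reply_fits = budget.fits("x" * 40, expected_output_tokens=200)
```

With the history at about 900 of 1,000 tokens, a 50-token reply still fits and a 200-token reply does not. That is the "full, not broken" state described above.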

Here’s the part most people miss: more capable models tend to produce longer outputs. Claude Opus reasons at length and writes extensively, and all of that costs tokens. So in a nearly full context, Opus may have less usable runway than you’d expect, because every response it generates is large.

The Haiku Trick (And What It Reveals)

When you’re stuck at the context limit, the instinct is to try a smarter model. That’s usually wrong.

The right move is to try a smaller one.

Haiku — Claude’s lightest, fastest model — can squeeze through a gap that Sonnet and Opus can’t fit through. It’s lean enough to do one small thing: update a task list, summarize where things stand, trigger a compaction. That small action unlocks the whole session again.

This isn’t a bug. It’s a feature, once you understand it.

The lesson: it’s not always about raw intelligence. It’s about fit. The right tool for the moment isn’t the most powerful one — it’s the one that can actually execute given the constraints you’re operating in.
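The idea of fit can be sketched as a simple selection rule: prefer the most capable option, but only if its typical output fits in the room that is left. The model labels and output sizes below are hypothetical placeholders for illustration, not measured figures, and `pick_model` is a name invented for this example.

```python
from typing import Optional, Sequence, Tuple

# (label, typical output size in tokens), ordered most capable first.
# These numbers are illustrative assumptions, not measurements.
CANDIDATES: Sequence[Tuple[str, int]] = [
    ("opus", 4000),
    ("sonnet", 2000),
    ("haiku", 300),
]


def pick_model(
    remaining_tokens: int,
    candidates: Sequence[Tuple[str, int]] = CANDIDATES,
) -> Optional[str]:
    """Return the first (most capable) candidate whose typical output fits."""
    for label, typical_output in candidates:
        if typical_output <= remaining_tokens:
            return label
    return None  # nothing fits: time to compact or start fresh


roomy = pick_model(5000)  # plenty of space left
tight = pick_model(500)   # near the wall
full = pick_model(100)    # no candidate fits
```

With plenty of room the rule picks the heaviest option; near the wall it falls through to the lightest one, which is the Haiku move in miniature.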

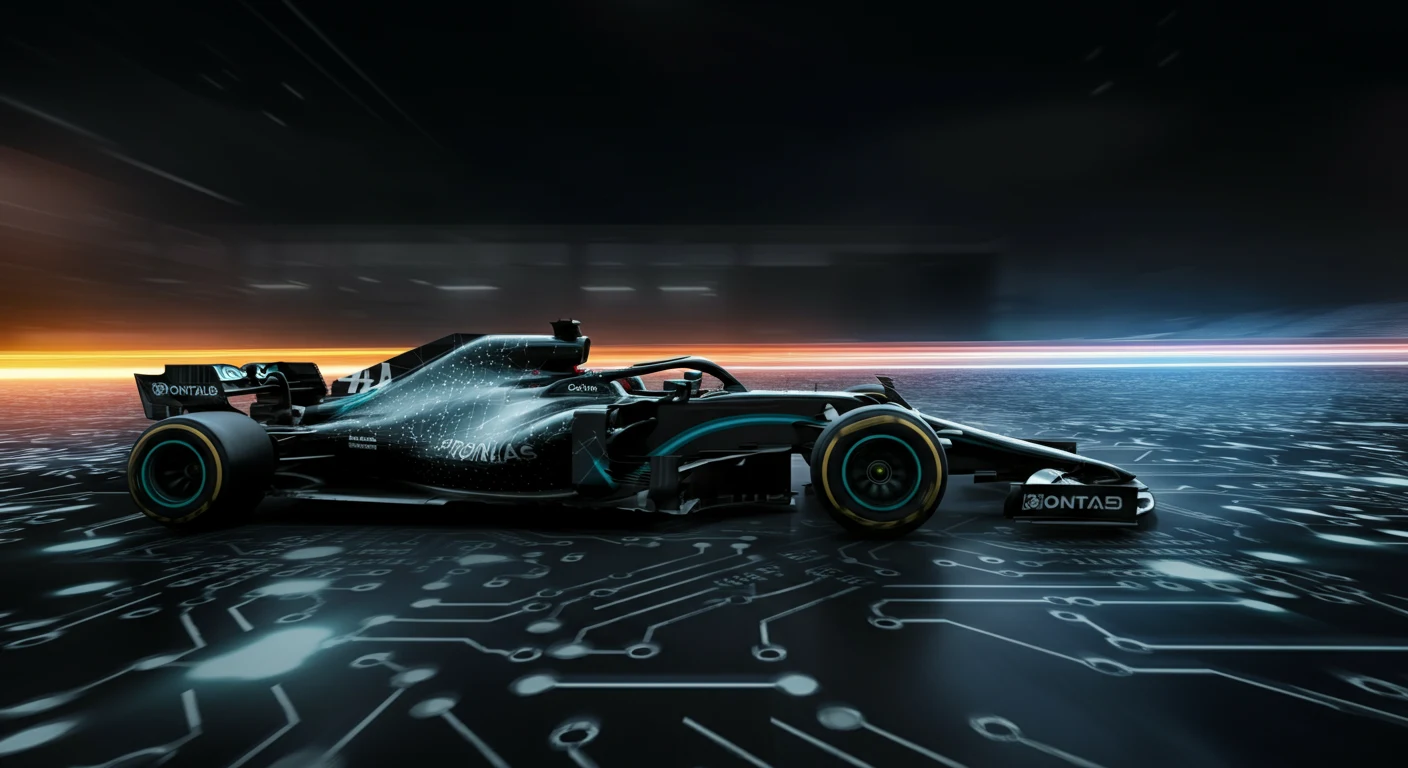

The Formula One Analogy

Formula One teams spend hundreds of millions building the fastest cars on earth. But the car doesn’t win races by itself. The driver decides when to pit, which tires to run, when to push and when to conserve. Two drivers in identical cars produce different results — sometimes dramatically different.

Working with AI at a high level is the same.

Most people are handed a powerful car and told to drive. They go fast for a while, then hit a wall and don’t know why. They try pressing harder on the accelerator. That doesn’t help.

The experienced operator reads the context. They know when the session is getting long and start pruning. They know when to swap models. They know when to compact, when to start fresh, when to hand off a task to a subagent in isolation. They understand the system, not just the tool.
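One way to picture "compact before you hit the wall" is a threshold check plus a summarize-and-trim step. This is a sketch under stated assumptions: the 80% threshold is arbitrary, the default summarizer is a stand-in that only counts messages, and `should_compact` and `compact` are hypothetical helpers rather than part of any real tool.

```python
from typing import Callable, List, Optional


def should_compact(used_tokens: int, window: int, threshold: float = 0.8) -> bool:
    """True once usage crosses the chosen fraction of the window."""
    return used_tokens >= threshold * window


def compact(
    messages: List[str],
    keep_last: int = 2,
    summarize: Optional[Callable[[List[str]], str]] = None,
) -> List[str]:
    """Replace older messages with one summary, keeping the most recent ones."""
    if len(messages) <= keep_last:
        return list(messages)  # nothing old enough to fold away
    if summarize is None:
        # Stand-in summarizer (assumption); a real one would condense the text.
        summarize = lambda old: f"[summary of {len(old)} earlier messages]"
    split = len(messages) - keep_last
    old, recent = messages[:split], messages[split:]
    return [summarize(old)] + list(recent)


history = ["plan", "draft", "tool output", "latest question"]
if should_compact(used_tokens=850, window=1000):
    history = compact(history, keep_last=2)
```

After this runs, the first two messages are folded into a single summary line and the two most recent ones are kept verbatim.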

That understanding only comes from hours in the seat.

What Agents Teach Us About Humans

Here’s the inversion most people miss.

We spend a lot of time asking: how do we make AI more like humans? But there’s a more interesting question: what can humans learn from how agents operate?

Agents succeed when they have clear, bounded context (not a mile-long thread of everything), a defined task (not “figure it out”), honest signals about capacity (not pushing through when overloaded), and the right model for the moment (not always the heaviest one).

Agents fail when context is polluted, tasks are ambiguous, or they try to do too much in a single pass.

Sound familiar? That’s also exactly why humans fail on complex work.

The Haiku moment is a perfect human analogy. When you’re overwhelmed and stuck, the answer usually isn’t to think harder. It’s to do the smallest possible thing that creates forward momentum. Clear one item. Make one decision. Unlock one next step.

That’s not dumbing it down. That’s operating intelligently within constraints.

The Hybrid Isn’t Human + AI

The real hybrid isn’t “a human who uses AI tools.”

It’s a human who has internalized how agents think — who naturally breaks work into discrete tasks, knows their own context limits (we call it cognitive load, but it’s the same thing), swaps in the right resource for the right job, and is honest about when they’re at capacity instead of producing garbage at 11 PM.

And it goes the other direction too. Agents get sharper when humans encode years of pattern recognition into them — through prompts, through memory systems, through skills built from real operational experience.

Your best agent workflows aren’t built from documentation. They’re built from the moment you got stuck at the token wall at midnight and figured out that Haiku could fit through the gap.

That knowledge doesn’t come from a tutorial. It comes from being in the car.

The Nuances You Only See From Inside

Here’s what I keep coming back to: the most valuable insights from working with AI at a high level are almost impossible to communicate without having lived them.

You can read about context windows. You can understand the concept intellectually. But the feel of a session getting heavy — that instinct that tells you to compact now, before you hit the wall — that only comes from experience.

Same with knowing when a task is too big for one conversation. When a subagent in isolation will outperform a single long thread. When the model’s “thinking” is just pattern-matching on noise in the context.

These are driver skills. And like any driver skill, they’re earned in the seat.

The people who get the most out of this technology aren’t necessarily the ones with the most technical knowledge. They’re the ones who’ve put in the hours. Who’ve gotten stuck, figured it out, and filed it away.

The car is available to everyone.

The driver makes the difference.

The Driver and the Car: What AI Agents Teach Us About Being Human. By Will Tygart (https://tygartmedia.com/about), Tygart Media. Published 2026-04-03.
