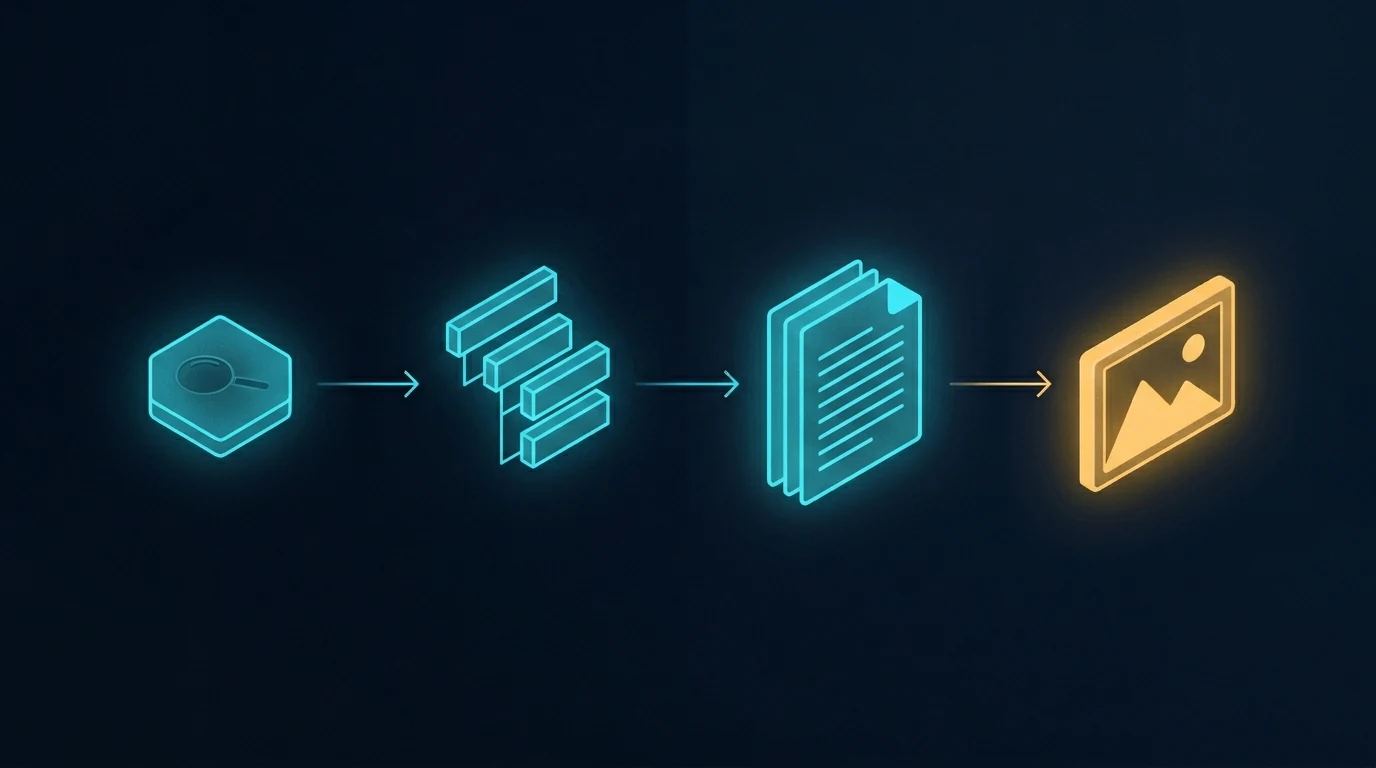

This is what I’m building for myself, and what I’m building for the people I work with. It’s a long essay because the shift it describes is large and the through-line matters. The ten images below aren’t decoration — they’re the spine. Each one is a moment in a life that doesn’t fully exist yet but is closer than most people realize.

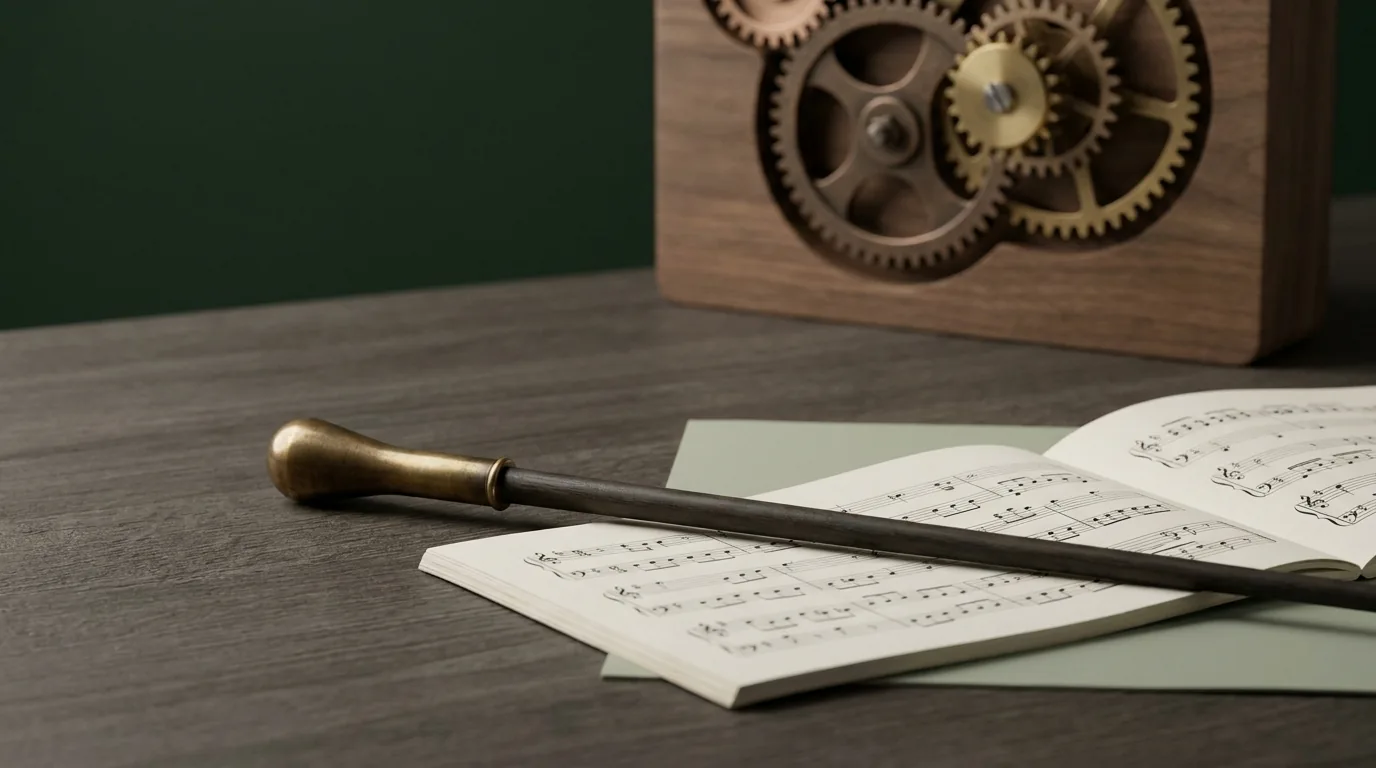

I want to start where the technology starts, which is not in a factory.

The man in the image above is finishing a wearable by hand. It’s an AR ring in leather and brushed aluminum, the band sized to his client’s hand, the materials chosen because his client cares about how the thing feels at 6 AM on the day she has to present to a board. Behind him are leather rolls and fabric swatches that wouldn’t look out of place in a coachbuilder’s atelier. To his right are the kind of objects you’d find in a hardware prototyping lab: chassis teardowns, a development tablet, AR glasses on a stand. The corkboard above the bench has automotive interior sketches and material studies pinned next to each other.

What that workshop is, in operational terms, is a luxury goods atelier and a hardware lab collapsed into one room. The collapse is the thing. The line between “this is bespoke craft” and “this is consumer electronics” has been melting for a decade, and the workshop above is what it looks like once that line is gone.

I’m building for the people who will live on the right side of that collapse. The people who don’t want a phone — they want an instrument that fits the way they think. The people who have stopped trusting mass-produced anything and started looking for the small workshop, the verified maker, the device tuned to them specifically. That’s the Curation Class. They’ve existed in clothing for a hundred years and in cars for sixty. They’re now showing up in technology, and the technology is the part of the story I have to build.

This essay is about what their daily life looks like when the ecosystem actually works. Then it’s about why I think this is where things go from here, and what I’m doing about it.

Introduction to the instrument

Meet the user. She’s the one who commissioned the work in the hero image. She’s an architect. The corkboard behind her is a hint, and the mood board with fashion sketches and house renderings tells you something about her taste. The coffee cup has a small leather wrap and a logo I won’t try to read; the flower in the vase is past its bloom but she hasn’t replaced it yet because she likes it that way.

She’s just opened the ecosystem the artisan was finishing. The hologram floating above the ring spells out what she’s getting: “Vibe Curation, Concierge Cred Network, Curated Intelligence.” The version number is v1.4, which tells you the device has been iterated. This isn’t a Kickstarter prototype. This is a maintained system that updates the way her car updates and her phone updates, except it updates to fit her specifically rather than to fit the median user.

The phrase “Personalized Ecosystem” deserves to be said carefully because it gets thrown around by everyone selling anything. What’s on her desk is different. It’s not a feature flag set to her preferences. It’s not a recommendation algorithm tuned to her purchase history. It’s an ecosystem in the literal sense — an interconnected set of devices, services, vendors, and contexts that have been wired together around her cognition, her body, her schedule, her taste, and the people she trusts. The wearable is the access token. The ecosystem is everything the token unlocks.

The reason this matters is not that the technology is impressive. It’s that the unit of value is changing. For a generation, the value was in the device. For the next generation, the value is in the connections between the devices and the person who wears them. You don’t buy the ring. You buy your way into the ecosystem that the ring represents. The ring is just the part you can touch.

This is what I’m building toward. Not the device. The connections.

The day starts with a small ritual

The first time the ecosystem touches her day, it’s a coffee. She’s at a café — bright, marble-countered, the kind of place that does third-wave coffee and serves it in a small ceramic cup. The barista is named Maria. The hologram above her ring is showing the order before Maria has had to ask: oat latte, 120°F (which is a specific temperature most people don’t know to ask for), Ethiopian Yirgacheffe roast.

The detail that matters is the parenthetical: “Maria (verified).”

This is the Concierge Cred Network. Maria isn’t just a barista. She’s been verified by the ecosystem — pulled up by name because she’s the one who makes the coffee the way the subject likes it. If Maria’s not working today, the ecosystem might suggest a different café entirely rather than route the order to a barista the system doesn’t trust to nail the temperature. The vendor relationship has become specific to the human, not the brand.

I want to name something about this image that the casual viewer might miss. The subject is barely looking at the ring. Her gaze is on Maria. The interaction is human; the technology is in the background doing the work that makes the interaction friction-free. When the ecosystem works, it disappears. It doesn’t ask her to type her order, doesn’t ask her to dig out her phone, doesn’t ask her to remember which roast she likes. It does that work upstream. What she’s left with is a moment of eye contact and a coffee that’s right.

This is, in my experience, the part most technology gets wrong. The goal isn’t to put more interface in front of people. The goal is to remove the interface from places it doesn’t belong. The Curation Class is willing to pay a premium for that subtraction.

The home she designed for herself

Now she’s home. The wall she’s touching is travertine — real stone, the kind with porosity you can feel under your fingertips. The hologram tells you the room is in a “Curated Sanctuary” mode and lists the materials: travertine and a cashmere blend. The room is calm. The light is afternoon. The chair is leather and looks like it’s been broken in for years.

The detail I want to pull forward is the curator field on the hologram: “User_24A. Verified.”

She is the curator. The “Verified” tag isn’t a brand verification. It’s her own. The space was designed by her, for her, and the ecosystem is tracking that fact. The wall, the light temperature, the fragrance the room is currently running, the sound dampening, the chair: all of it is a vibe she composed, and the ecosystem simply executes it.

This is where the Curation Class diverges most sharply from the mass-luxury class that came before it. The old luxury class hired Robert Mion or Kelly Wearstler to curate for them. They bought the taste of someone whose taste was for sale. The new class makes the curation themselves and uses the ecosystem to remember the choices and reproduce them. The taste isn’t borrowed. It’s authored. The ecosystem is what makes authored taste tractable at the level of a daily-running home.

I’ll be honest about why this matters to me operationally. When I think about what I’m building for my best clients — the ones who are paying for something more than a website or a content pipeline — I’m not building campaigns. I’m building the systems that let them author their own taste and reproduce it at scale. The Notion structure is part of that. The content stack is part of that. The way we wire models and routing and observability is part of that. None of it is technology for its own sake. All of it is the infrastructure of authored taste.

The room above is what that looks like when it’s done.

The work she actually does

The studio above is hers. The building is hers too: she’s an architect, and “The Veda Residences” is the project she’s leading. The hologram shows iteration v9.2, which means this design has been worked through many times. The physical model on the leather pad is what she refers to when the holographic version isn’t enough.

A few things to notice. The drafting table has a real architect’s set square on it. The materials board has fabric and stone swatches that look like they were pulled from suppliers she trusts. The two colleagues in the back are visible through a glass partition; the studio isn’t a solo operation. It’s a small firm.

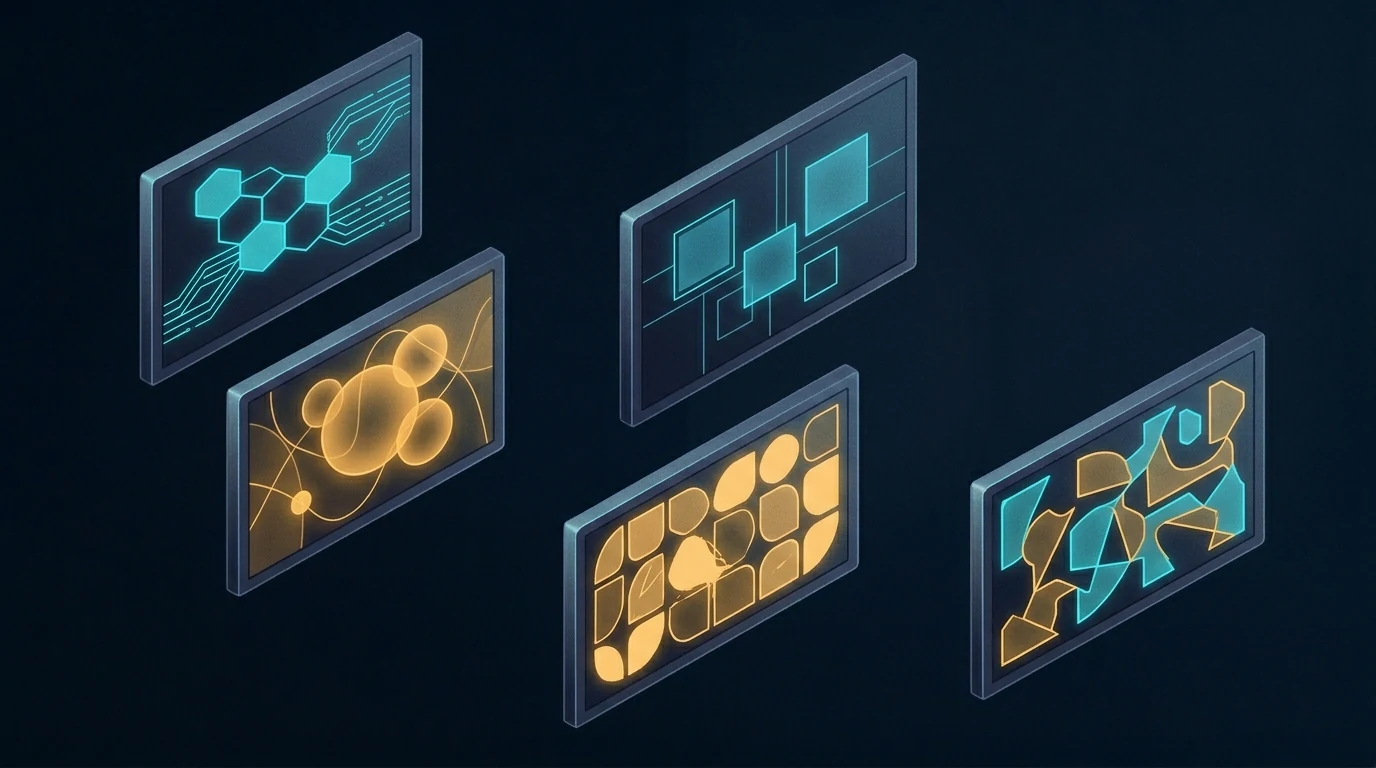

What the ecosystem gives her here isn’t draft generation. It’s not “AI did the design.” The design is hers, plus her team’s. The ecosystem gives her something subtler — the ability to iterate v9.2 against her own internal coherence rules, her own taste profile, her firm’s body of work, the structural and material verifications she requires. She is still making every decision. The ecosystem is making every decision legible and reproducible.

This is the part I think most people get wrong about where AI is going. They think it’s going to do the work. It’s not. It’s going to make the work expressible. The architect above doesn’t need an AI to design her building. She needs an instrument that lets her ask “would this material be coherent with the rest of my catalog?” and get an answer with citations. She needs the ecosystem to be the silent third party that holds her own standards more reliably than she can hold them in her head across a months-long project.

The building she’s designing in this image, by the way, is the one she’ll be standing inside in the last image of this essay. Hold that. We’ll come back to it.

Recovery, the part the ecosystem treats as work

After the work, the recovery. The image above is what wellness looks like when it stops being a separate vertical and becomes a function of the same ecosystem that runs the rest of the day.

The hologram says “Vibe State Recovery (post-design cycle).” That phrase is doing real work. The ecosystem knows she just spent eight hours on iteration v9.2 of the building project. It knows what that does to her body — the cortisol curve, the shoulder tension, the eye strain. It’s prescribing a recovery protocol that’s specific to what she just did. Not a generic massage. Not a generic meditation. A recovery state tuned to a design cycle.

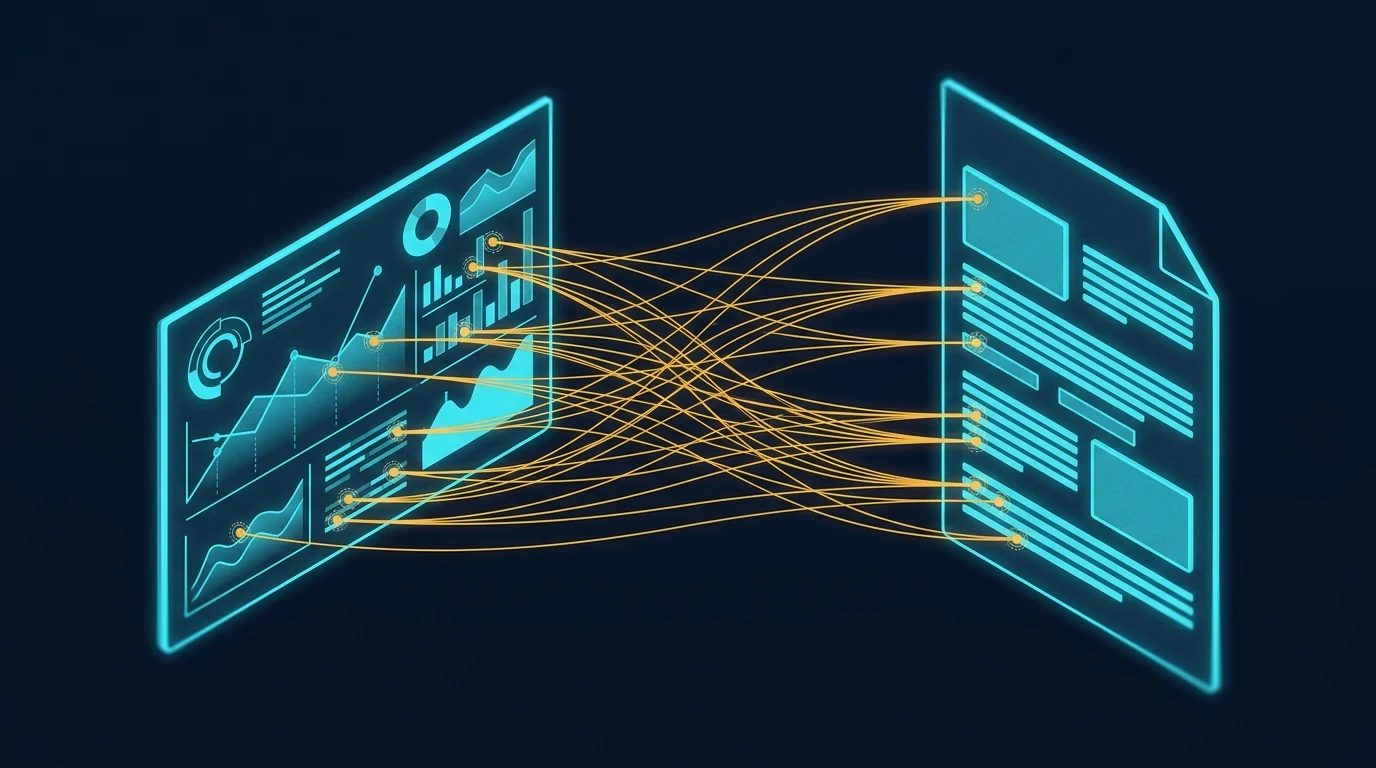

“Second Brain (User_24A): Verified Biometrics” is the connective tissue here. The wellness system isn’t reading her body from scratch. It’s reading her body in the context of everything else the ecosystem knows about her — her schedule, her work, her sleep history, her stress baseline, her medication if any, her preferences for what kinds of intervention she’ll accept. The Second Brain in this image isn’t a metaphor. It’s literally the persistent memory layer that lets every part of the ecosystem behave intelligently with respect to every other part.

If I had to name what I think the single biggest unlock of the next ten years will be, it would be this: persistent personal memory that crosses contexts. Right now your fitness app doesn’t know what your therapist said. Your calendar doesn’t know what your sleep tracker measured. Your travel booking doesn’t know your spouse’s allergy profile. Each of these systems is islanded. The Curation Class will be the first cohort to live in a world where those islands are connected, and the connection will be the persistent personal Second Brain that they own — not a vendor’s database. Theirs.

This is, again, why I do what I do. Not because I want to sell people on “AI wellness.” Because the architectural pattern of a persistent personal Second Brain, owned by the human, is the foundation everything else rides on.

A deeper intervention

The session continues. She’s now holding a more specific tool — a neural stim device that’s been issued to her, the kind of thing that has to be verified for her specifically because applying it wrong would do real damage. The hologram says “Neural Pathway Targeted: Verified.” The ecosystem isn’t just letting her use the device. It’s verifying that the protocol is appropriate for her at this moment.

The phrase “Vedic Regeneration” is doing some cultural work here. I’m not going to oversell it — different people will read different things into it. What I’ll say operationally is that the Curation Class tends to be polyglot about where its wellness traditions come from. They’ll combine cold plunges, somatic therapy, Ayurvedic principles, and neural-feedback hardware in the same week without feeling the contradictions. The ecosystem is what makes that polyglot stance tractable — it can hold the protocols from five different traditions and apply the one that fits the moment.

The reason a verification layer matters is a harder subject. We’re entering an era where people will be doing more sophisticated interventions on their own nervous systems than ever before. Some of those interventions will be safe. Some won’t. Some will work for one person and harm another. The ecosystem above is doing what regulators won’t be able to do for another fifteen years: ensuring that a specific intervention is appropriate for a specific person on a specific day. The verification isn’t bureaucratic. It’s the thing that lets her safely run the protocol at all.

I’ll name the discomfort here. There’s a version of this that ends badly — concentration of biometric data, vendor lock-in, dependence on a system that someone else can shut down. That risk is real. The mitigation isn’t to refuse the technology. The mitigation is to own the Second Brain rather than rent it. Which is part of why I’m building the way I’m building. The architecture matters. The architecture is the politics.

The commute as part of the system

She’s in the car now. It’s autonomous — the road is moving but her attention is on the floating dashboard. The destination on the hologram is her own design studio at 11 Rivoli. ETA fourteen minutes.

The phrase that earns its keep is “Flow State Curation.” The car isn’t just transporting her body. The car is preparing her cognition for what’s about to happen at the studio. Audio profile tuned. Cabin temperature optimized. Lighting on a curve that brings her up into focus rather than letting her crash at the end of the recovery session. The fourteen minutes between wellness and work aren’t dead minutes. They’re a transition that the ecosystem is actively shaping.

When I look at this image I think about how much of contemporary life is wasted in transitions. The Curation Class won’t tolerate it. Their time is their most expensive asset, and they’re willing to pay to make transitions productive rather than lost. The autonomous car is part of that. So is the ring. So is the wellness suite. So is the studio. None of them in isolation is interesting. Stitched together, they amount to an enormous economic shift.

The other thing worth naming: the car is bespoke. “Smart cashmere & polished aluminum, verified.” This is not a leased Tesla. It’s a vehicle whose interior materials have been chosen for her, verified by the maker, and integrated into the ecosystem in a way that lets the car participate in the flow state curation rather than fight it. The market for that kind of vehicle barely exists today. It will exist in ten years, and it will be larger than people think.

Collaboration at scale

The studio meeting. Four colleagues, a marble table, a wall of glass onto the city. She’s standing because she’s leading.

The hologram says “Group Alignment 88%.” That’s the part I want to pull forward. The ecosystem isn’t just running her individually — it’s running a measurement of how aligned her team is on the current iteration of the project. Eighty-eight percent is high. Twelve percent is the gap she has to close in the room.

This is where the Curation Class moves from being a personal lifestyle to being an operational advantage. A team that can see its own alignment in real time, that can identify the twelve percent of disagreement and address it directly rather than letting it metastasize through three more meetings — that team will outperform a team that can’t. The ecosystem is doing the work of measurement that used to require an executive coach in the room. Now it’s just there, on the table, visible to everyone.

I want to be careful here. There’s a version of this where the alignment metric becomes a cudgel, where dissent gets flattened by the pressure to push the number up. That’s a failure mode and the ecosystem above can absolutely become it if the culture around it is wrong. The fix isn’t to refuse the measurement. The fix is to make the measurement legible enough that disagreement is preserved as signal rather than erased as noise. The ecosystem can do that. Whether the team uses it that way is a cultural question, not a technological one.

The technology, by itself, is neutral. The culture decides whether it’s surveillance or instrumentation. I’m building for the latter.

The arc closes

This is the image that earns the whole essay.

She’s standing inside the building. The Veda Residences — the project that was iteration v9.2 in the studio scene — is now built. The curved concrete, the fluted glass, the composite timber that the hologram in that earlier scene specified, all of it has gone from model to reality. She designed the room she is now living in. The hologram above her is reporting that the sanctuary is “realized” and that the alignment is at 100%, which is the team-level analog of the personal sanctuary she was tuning at home.

She designed her own world into existence. The ecosystem made the through-line tractable across nine months of design iterations, two construction phases, fifteen vendor relationships, three biometric recovery cycles, a hundred small daily curations, and the original choice — three years earlier — to commission a hand-finished AR ring from a maker who works with leather and aluminum on a single bench.

The Curation Class is not, fundamentally, a class that consumes better products. It’s a class that authors its own life and uses an ecosystem to make the authorship coherent across time. The wearable, the home, the studio, the wellness suite, the car, the team, the building — these are all expressions of one continuous act of authorship. The technology is the substrate. The taste is the act. The realization is the proof.

Why I’m building for this

I started this essay by saying it’s about what I’m building for myself and my clients. I want to close on that more directly.

I am not building generic AI tools. I am not building “content automation.” I am building the operational substrate that lets a person — a founder, an operator, an artist, an architect — author their own coherent system across time and have the system reliably express the authorship. That’s the Notion architecture. That’s the model routing layer. That’s the content pipeline. That’s the persistent memory. None of it is interesting in isolation. All of it is interesting because of what it adds up to.

The person I am building for is the architect above. She doesn’t know me. She might not exist yet. But the infrastructure that makes her life tractable is the infrastructure I am wiring this week, this month, this year. Every client I take on is a step toward making the substrate real. Every article I publish is a way of describing the future I’m trying to bring forward. Every system I document is a piece of the operating manual for the Curation Class.

I think this is the work. I think it’s where the next ten years are. I think the people who get this right will look back at the current era — when AI was being used to mass-produce the same five blog posts and the same five product descriptions — the way the Bauhaus generation looked back at Victorian ornament. They will see the gap between what was being built and what could have been built, and they will name it.

I’m trying to be on the right side of that gap.

The image above — the woman standing inside the building she designed, with a glass of water, watching the city she optimized — is what I’m working toward. Not for her specifically. For the version of that life that becomes available to anyone who decides to author it and has the infrastructure to do so. That’s the Curation Class. That’s the brief I’m operating under. That’s the future I’m building.

It’s already starting. The man in the first image is finishing the ring by hand. The system is being built. The class is forming. The rest is execution.