TL;DR: Ninety-five percent of enterprise Generative AI pilots fail to deliver measurable ROI. Gartner projects that more than 40% of agentic AI projects will be canceled by the end of 2027. The missing variable isn’t better models; it’s the Expert-in-the-Loop architecture that keeps autonomous systems honest.

The $600 Billion Misfire

Enterprise AI spending has crossed the half-trillion-dollar mark, yet the return on that investment remains stubbornly low. The figure cited most often in consulting reports from Deloitte, Capgemini, and McKinsey is brutal: 95% of Generative AI pilots never reach production or deliver measurable ROI.

The failure isn’t technological. The models work. GPT-4, Claude, Gemini — they reason, they synthesize, they generate. The failure is architectural. Organizations treat AI as an isolated tool bolted onto existing workflows rather than redesigning the operating model around what autonomous systems actually need: guardrails, governance, and a human who knows when to pull the brake.

From the Task Economy to the Knowledge Economy

The first wave of AI adoption automated individual tasks — summarize this document, draft this email, classify this ticket. That was the Task Economy. It delivered marginal gains.

The shift happening now is toward the Knowledge Economy: orchestrating complex, multi-agent workflows where specialized AI systems reason through multi-step problems, delegate subtasks to smaller models, and execute against real-world APIs. This is the agentic paradigm, and it changes the risk calculus entirely.

When an AI agent autonomously decides to reclassify a patient’s insurance code, reroute a supply chain, or publish content at scale, the blast radius of a hallucination isn’t a bad email — it’s a compliance violation, a financial loss, or a reputational crisis.

The Confidence Gate Architecture

The Expert-in-the-Loop model doesn’t slow AI down. It makes AI trustworthy enough to accelerate. The architecture works through a Confidence Gate — a decision checkpoint where the system evaluates its own certainty before proceeding.

When confidence is high and the domain is well-mapped, the agent executes autonomously. When confidence drops below a set threshold (ambiguous inputs, novel edge cases) or the decision is high-stakes, the system routes it to a verified human expert who acts as a circuit breaker.

This isn’t human-in-the-loop in the old sense of manual approval queues. The Expert-in-the-Loop is selective, triggered only when the system’s own uncertainty metric warrants it. The result: autonomous velocity with human accountability.
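A minimal sketch of such a gate follows. It assumes each agent output already carries a calibrated confidence score between 0 and 1 and a high-stakes flag set by some upstream policy. The names `AgentOutput`, `Route`, and the 0.85 threshold are illustrative assumptions, not part of any specific framework.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "autonomous"
    EXPERT_REVIEW = "expert_review"


@dataclass(frozen=True)
class AgentOutput:
    action: str
    confidence: float  # assumed: a calibrated score in [0, 1]
    high_stakes: bool  # assumed: set by an upstream policy, not by the agent


# Hypothetical default; a real threshold would be tuned per domain.
CONFIDENCE_THRESHOLD = 0.85


def confidence_gate(output: AgentOutput,
                    threshold: float = CONFIDENCE_THRESHOLD) -> Route:
    """Decide whether an output may execute or must go to an expert."""
    if not 0.0 <= output.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    # Escalate on low confidence OR high stakes; everything else runs.
    if output.high_stakes or output.confidence < threshold:
        return Route.EXPERT_REVIEW
    return Route.AUTONOMOUS
```

Because the gate is a pure function, it is cheap to run on every output, and the expert only sees the cases that trip it. Treating every high-stakes action as an escalation regardless of confidence is a conservative choice made for this sketch.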

Agentic Context Engineering: The Operating System for Trust

Making this work at scale requires what researchers now call Agentic Context Engineering (ACE). Traditional prompt engineering treats context as static — a system prompt that never changes. ACE treats context as an evolving playbook.

The framework uses three roles operating in concert: a Generator that produces outputs, a Reflector that evaluates those outputs against known constraints and distills lessons from them, and a Curator that merges those lessons into the context as small, incremental updates. This prevents “context collapse”: the gradual loss of detail, and with it performance, that sets in when a long-running context is repeatedly rewritten or compressed wholesale and fills with noise.

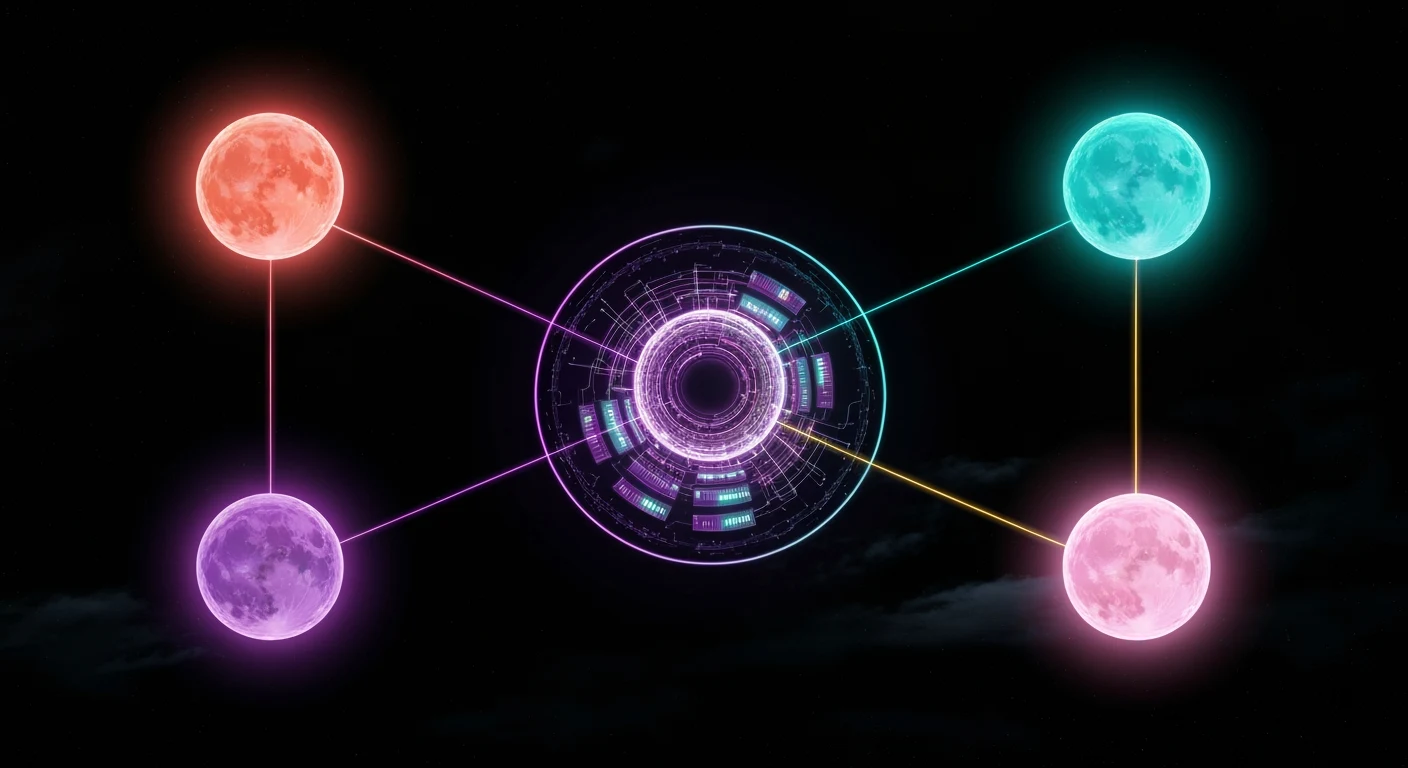
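The three roles can be sketched as plain functions around a shared playbook. This is a toy illustration under heavy assumptions: `generator` and `reflector` are stand-ins for model calls, and the `Playbook` class, the keyword-matching rule, and all function names are hypothetical. The point it shows is the Curator appending small deltas instead of rewriting the whole context.

```python
from dataclasses import dataclass, field


@dataclass
class Playbook:
    """Evolving context: short lessons that are appended, never rewritten wholesale."""
    entries: list[str] = field(default_factory=list)

    def render(self) -> str:
        return "\n".join(f"- {entry}" for entry in self.entries)


def generator(task: str, playbook: Playbook) -> str:
    # Stand-in for a model call that would receive the task plus playbook.render().
    return f"answer to '{task}' using {len(playbook.entries)} playbook entries"


def reflector(task: str, output: str, constraints: dict[str, str]) -> list[str]:
    # Stand-in evaluation: emit a lesson for each constraint keyword the task touches.
    return [lesson for keyword, lesson in constraints.items()
            if keyword in task.lower()]


def curator(playbook: Playbook, lessons: list[str]) -> int:
    # Incremental update: add only new lessons; existing entries stay untouched.
    added = 0
    for lesson in lessons:
        if lesson not in playbook.entries:
            playbook.entries.append(lesson)
            added += 1
    return added


def ace_step(task: str, playbook: Playbook, constraints: dict[str, str]) -> str:
    output = generator(task, playbook)
    curator(playbook, reflector(task, output, constraints))
    return output


playbook = Playbook()
constraints = {"refund": "Refunds over the limit need approval."}  # hypothetical rule
ace_step("Process refund for order 1", playbook, constraints)
ace_step("Process refund for order 2", playbook, constraints)
```

After both calls the playbook holds the refund lesson exactly once: the second pass finds nothing new to add, so the context grows only when there is something to learn.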

The Orchestrator-Specialist Model

The most effective enterprise deployments in 2026 aren’t running one massive model for everything. They use an Orchestrator-Specialist architecture: a highly capable LLM (Claude Opus, GPT-4) acts as the orchestrator, breaking complex tasks into subtasks and delegating execution to a fleet of domain-specific Small Language Models (SLMs).

The orchestrator handles reasoning and planning. The specialists handle execution: fast, cheap, and within a narrow competency boundary. This architecture can reduce cost by 60-80% compared with routing everything through a frontier model, while maintaining quality where it matters.
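As a rough sketch of the pattern, the orchestrator below plans subtasks and dispatches each one to a registered specialist. Everything here is an assumption for illustration: the specialists are simple lambdas standing in for small models, `plan` stands in for a frontier-model call, and the prices in the savings calculation are made-up units, not real vendor pricing.

```python
from typing import Callable

Specialist = Callable[[str], str]

# Hypothetical specialists; each stands in for a small domain-specific model.
SPECIALISTS: dict[str, Specialist] = {
    "summarize": lambda text: f"[summary] {text[:40]}",
    "classify": lambda text: (
        f"[label] {'billing' if 'invoice' in text.lower() else 'general'}"
    ),
}


def plan(task: str) -> list[tuple[str, str]]:
    # Stand-in for the orchestrator's planning step (a frontier-model call in practice).
    return [("classify", task), ("summarize", task)]


def orchestrate(task: str) -> list[str]:
    results = []
    for skill, payload in plan(task):
        specialist = SPECIALISTS.get(skill)
        if specialist is None:
            raise KeyError(f"no specialist registered for skill '{skill}'")
        results.append(specialist(payload))
    return results


def savings_vs_frontier_only(frontier_share: float,
                             frontier_price: float,
                             slm_price: float) -> float:
    """Fractional cost saved when only `frontier_share` of the work hits the big model."""
    blended = frontier_share * frontier_price + (1 - frontier_share) * slm_price
    return 1 - blended / frontier_price
```

With illustrative numbers, sending 20% of the work to a frontier model priced at 10 units and the rest to specialists priced at 1 unit gives a blended cost of 2.8 units, a saving of about 72%. The actual figure depends entirely on the real price gap and on how much planning the task needs.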

What This Means for Your Business

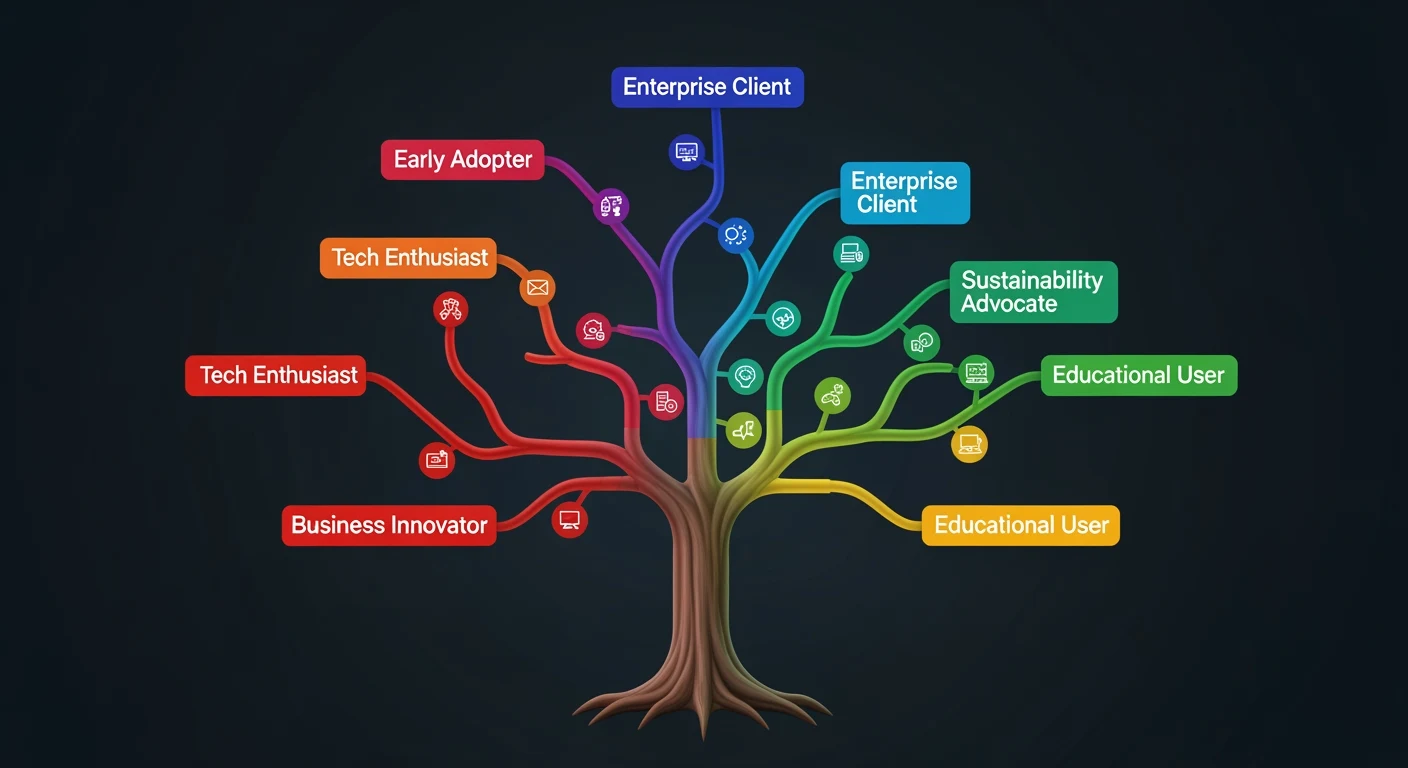

If you’re planning an AI deployment in 2026, here’s the framework that separates the 5% that succeed from the 95% that don’t:

First, audit your decision taxonomy. Map every AI-assisted decision by stakes and reversibility. Low-stakes, reversible decisions (content drafts, data classification) can run fully autonomous. High-stakes, irreversible decisions (financial transactions, medical recommendations, legal compliance) require Expert-in-the-Loop gates.
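One way to start that audit is a small table in code. The sketch below assumes two boolean axes, stakes and reversibility, and gates everything except the low-stakes, reversible quadrant. The text above only names the two extreme quadrants, so gating the mixed cases is a conservative assumption, and the `Decision` and `Oversight` names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    AUTONOMOUS = "autonomous"
    EXPERT_GATE = "expert_in_the_loop"


@dataclass(frozen=True)
class Decision:
    name: str
    high_stakes: bool
    reversible: bool


def required_oversight(decision: Decision) -> Oversight:
    # Only low-stakes, reversible decisions run without a gate.
    # Mixed quadrants are gated here as a conservative assumption.
    if not decision.high_stakes and decision.reversible:
        return Oversight.AUTONOMOUS
    return Oversight.EXPERT_GATE


# Examples taken from the categories named in the text.
TAXONOMY = [
    Decision("content draft", high_stakes=False, reversible=True),
    Decision("data classification", high_stakes=False, reversible=True),
    Decision("financial transaction", high_stakes=True, reversible=False),
    Decision("medical recommendation", high_stakes=True, reversible=False),
]

audit = {d.name: required_oversight(d).value for d in TAXONOMY}
```

The resulting `audit` mapping is the artifact worth reviewing with domain owners, since the hard arguments are usually about which quadrant a decision belongs in.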

Second, implement confidence scoring. Every agent output should carry a confidence metric. Build routing logic that escalates low-confidence outputs to domain experts — not managers, not generalists, but people with verified expertise in the specific domain.
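The escalation step itself might look like the sketch below, which picks a verified expert for the output's domain and refuses to fall back to a generalist. The `Expert` record, the domain labels, the roster, and the 0.85 threshold are all hypothetical, chosen only to make the routing rule concrete.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Expert:
    name: str
    domains: frozenset[str]
    verified: bool


def pick_expert(domain: str, experts: list[Expert]) -> Expert:
    """Return the first verified expert for the domain; never a generalist."""
    for expert in experts:
        if expert.verified and domain in expert.domains:
            return expert
    raise LookupError(f"no verified expert available for domain '{domain}'")


def route(domain: str, confidence: float, experts: list[Expert],
          threshold: float = 0.85) -> Optional[Expert]:
    """Return None when the agent may proceed, else the expert to escalate to."""
    if confidence >= threshold:
        return None
    return pick_expert(domain, experts)


# Hypothetical roster for illustration.
experts = [
    Expert("operations manager", frozenset({"operations"}), verified=True),
    Expert("trainee coder", frozenset({"medical_coding"}), verified=False),
    Expert("certified coder", frozenset({"medical_coding"}), verified=True),
]
```

Raising an error when no verified expert exists is deliberate in this sketch: a low-confidence output with nobody qualified to review it should stop, not quietly land in a manager’s inbox.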

Third, design for context persistence. Use ACE principles to maintain living context that evolves with each interaction rather than starting from zero every session. Your AI should get smarter about your business every day, not reset every morning.
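At its simplest, context persistence means writing the playbook to durable storage at the end of each session and loading it at the start of the next. The sketch below assumes a JSON file as the store and a flat list of lesson strings as the context. The function names and the file name are hypothetical, and a production system would more likely use a database with versioning.

```python
import json
import tempfile
from pathlib import Path


def load_context(path: Path) -> list[str]:
    # A missing file means a first session: start with an empty playbook.
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def save_context(path: Path, entries: list[str]) -> None:
    path.write_text(json.dumps(entries, indent=2), encoding="utf-8")


def end_session(path: Path, new_lessons: list[str]) -> list[str]:
    """Merge this session's lessons into the stored playbook and persist it."""
    entries = load_context(path)
    for lesson in new_lessons:
        if lesson not in entries:
            entries.append(lesson)
    save_context(path, entries)
    return entries


# Two simulated sessions against a temporary store.
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "playbook.json"
    day_one = end_session(store, ["Invoices over the limit need approval."])
    day_two = end_session(store, ["Invoices over the limit need approval.",
                                  "Use ISO dates in reports."])
```

The second session starts from what the first one learned and adds only the new lesson, which is the difference between a living context and one that resets every morning.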

The enterprises that win the AI race won’t be the ones with the biggest models. They’ll be the ones with the smartest architectures — systems where machines do what machines do best and humans do what humans do best, orchestrated through governance frameworks that make the whole system trustworthy.

{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "The Expert-in-the-Loop Imperative: Why 95% of Enterprise AI Fails Without Human Circuit Breakers",
  "description": "Ninety-five percent of enterprise AI fails to deliver ROI. The missing variable isn’t better models — it’s Expert-in-the-Loop architecture with Conf",
  "datePublished": "2026-03-30",
  "dateModified": "2026-04-03",
  "author": {
    "@type": "Person",
    "name": "Will Tygart",
    "url": "https://tygartmedia.com/about"
  },
  "publisher": {
    "@type": "Organization",
    "name": "Tygart Media",
    "url": "https://tygartmedia.com",
    "logo": {
      "@type": "ImageObject",
      "url": "https://tygartmedia.com/wp-content/uploads/tygart-media-logo.png"
    }
  },
  "mainEntityOfPage": {
    "@type": "WebPage",
    "@id": "https://tygartmedia.com/the-expert-in-the-loop-imperative-why-95-of-enterprise-ai-fails-without-human-circuit-breakers/"
  }
}