Last refreshed: May 15, 2026

The hardest problem in running an AI-native operation is not the AI — it’s the memory. Claude’s context window is large but finite. It resets between sessions. Every conversation starts from zero unless you engineer something that prevents it.

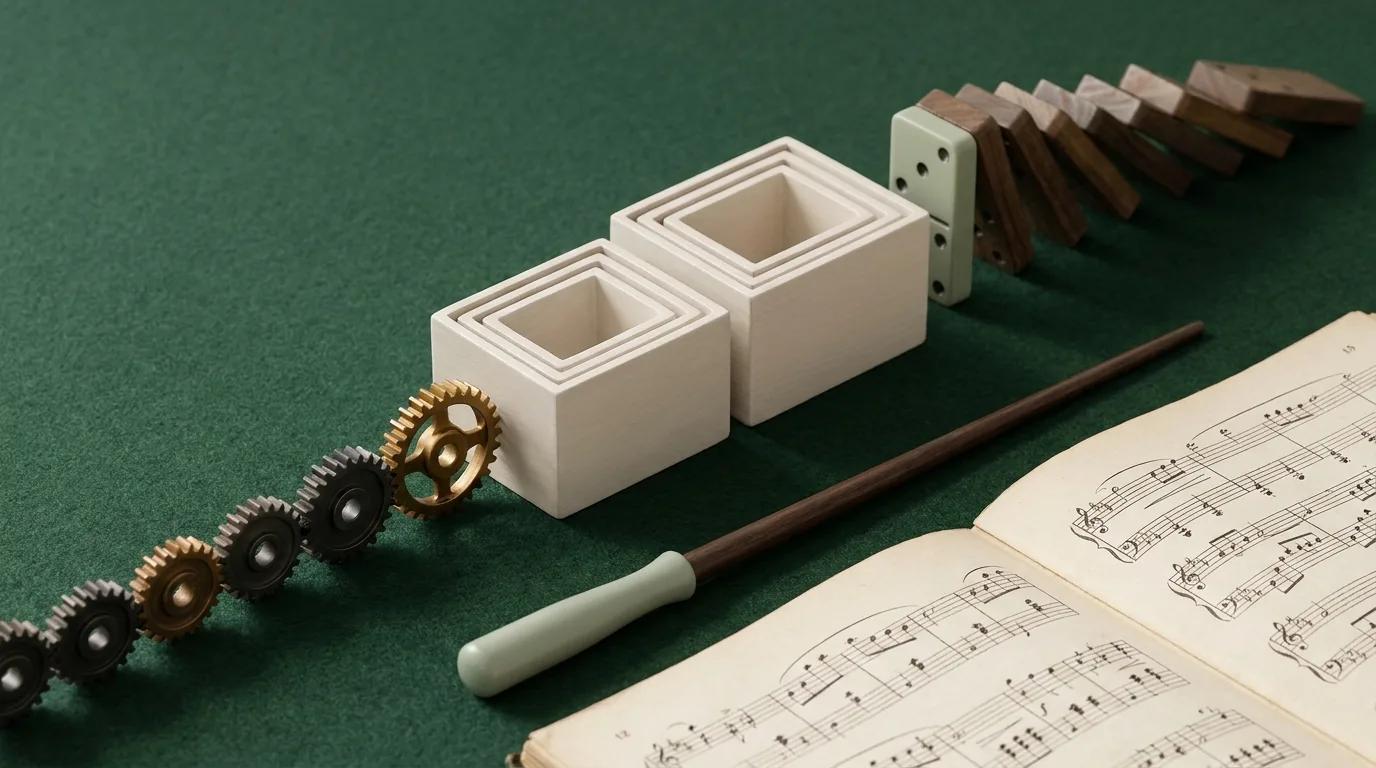

For a solo operator running a complex business across multiple clients and entities, that reset is a real operational problem. The solution we built combines Notion as the human-readable knowledge layer with BigQuery as the machine-readable operational history — a persistent memory infrastructure that means Claude never truly starts from scratch.

Here’s how the architecture works and why each layer exists.

Why Two Layers

Notion and BigQuery solve different parts of the memory problem.

Notion is optimized for human-readable, structured documents. An SOP in Notion is readable by a person and fetchable by Claude. But Notion isn’t a database in the traditional sense — it doesn’t support the kind of programmatic queries that make large-scale operational history navigable. Searching five hundred knowledge pages for a specific historical data point is slow and imprecise in Notion.

BigQuery is optimized for exactly that: large-scale structured data that needs to be queried programmatically. Operational history — every piece of content published, every session’s decisions, every architectural change — lives in BigQuery as structured records that can be queried precisely and quickly. But BigQuery records aren’t human-readable documents. They’re rows in tables, useful for lookup and retrieval but not for the kind of contextual understanding that Notion pages provide.

Together they cover the full memory requirement: Notion for what the operation knows and how things are done, BigQuery for what the operation has done and when.

The Notion Layer: Structured Knowledge

The Notion knowledge layer is the Knowledge Lab database — SOPs, architecture decisions, client references, project briefs, and session logs. Every page carries the claude_delta metadata block that makes it machine-readable: page type, status, summary, entities, dependencies, and a resume instruction.
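To make the metadata block concrete, here is a minimal sketch of how it might be represented and validated. The source names the fields but not the format, so the JSON encoding, the snake_case field names, and the choice of required fields are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ClaudeDelta:
    """Hypothetical shape for a claude_delta block; field names are assumed."""
    page_type: str
    status: str
    summary: str
    entities: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    resume_instruction: str = ""


# Assumption: these three fields must be present and non-empty.
REQUIRED = ("page_type", "status", "summary")


def parse_claude_delta(raw: str) -> ClaudeDelta:
    """Parse a JSON-encoded metadata block and reject incomplete ones."""
    data = json.loads(raw)
    missing = [key for key in REQUIRED if not data.get(key)]
    if missing:
        raise ValueError(f"claude_delta block is missing: {', '.join(missing)}")
    return ClaudeDelta(
        page_type=data["page_type"],
        status=data["status"],
        summary=data["summary"],
        entities=list(data.get("entities", [])),
        dependencies=list(data.get("dependencies", [])),
        resume_instruction=data.get("resume_instruction", ""),
    )


# Illustrative values only; not taken from a real page.
example = """
{
  "page_type": "sop",
  "status": "active",
  "summary": "How client content is reviewed before publishing.",
  "entities": ["example-client"],
  "dependencies": ["page-id-of-style-guide"],
  "resume_instruction": "Start at the review checklist."
}
"""
delta = parse_claude_delta(example)
print(delta.page_type, delta.status)
```

Rejecting blocks with missing fields at parse time is one way to keep an incomplete page from silently entering a session’s context.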

The Claude Context Index — a master registry page listing every key knowledge page with its ID, type, status, and one-line summary — is the entry point. At the start of any session touching the knowledge base, Claude fetches the index and identifies the relevant pages for the current task. The index-then-fetch pattern keeps context loading fast and targeted.
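The index-then-fetch pattern can be sketched roughly as follows. In the real workflow Claude reads the index and judges relevance itself; the keyword match below is only a stand-in for that judgment. `IndexEntry`, `select_pages`, `load_context`, and the in-memory `fake_store` are hypothetical names, not part of Notion’s API.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class IndexEntry:
    """One row of the index: ID, type, status, one-line summary."""
    page_id: str
    page_type: str
    status: str
    summary: str


def select_pages(index: list[IndexEntry], task_terms: set[str]) -> list[IndexEntry]:
    """Pick active index entries whose summary mentions any task term."""
    selected = []
    for entry in index:
        if entry.status != "active":
            continue
        words = set(re.findall(r"[a-z0-9]+", entry.summary.lower()))
        if words & task_terms:
            selected.append(entry)
    return selected


def load_context(
    index: list[IndexEntry],
    task_terms: set[str],
    fetch_page: Callable[[str], str],
) -> dict[str, str]:
    """Fetch only the pages selected from the index, keyed by page ID."""
    return {e.page_id: fetch_page(e.page_id) for e in select_pages(index, task_terms)}


index = [
    IndexEntry("page-1", "sop", "active", "Publishing checklist for client content"),
    IndexEntry("page-2", "architecture", "active", "BigQuery ledger schema decisions"),
    IndexEntry("page-3", "sop", "archived", "Old publishing workflow"),
]
# Stand-in for a real page fetch; a live version would call the Notion API.
fake_store = {"page-1": "checklist body", "page-2": "schema body", "page-3": "old body"}

context = load_context(index, {"publishing"}, fake_store.__getitem__)
print(list(context))
```

The point of the design is that only the small index is read in full; page bodies are fetched after selection, so context is spent on the pages the task actually needs.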

What the Notion layer provides: the institutional knowledge of how the operation works, what has been decided, and what the constraints are for any given client or project. This is the layer that makes Claude operate consistently across sessions — not by remembering the previous session, but by reading the same underlying knowledge base that governed it.

The BigQuery Layer: Operational History

The BigQuery operations ledger is a dataset in Google Cloud that holds the operational history of the business: every content piece published with its metadata, every significant session’s decisions and outputs, every architectural change to the systems, and — most importantly — the embedded knowledge chunks that enable semantic search across the entire knowledge base.

The knowledge pages from Notion are chunked into segments and embedded using a text embedding model. Those embedded chunks live in BigQuery alongside their source page IDs and metadata. When a session needs to find relevant knowledge that isn’t covered by the Context Index, a semantic search against the embedded chunks surfaces the right pages without requiring a manual search.
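As a rough illustration of the chunking step, the sketch below groups a page’s paragraphs into word-limited chunks and tags each one with its source page ID, ready to be embedded and loaded. The chunk size, the paragraph-based splitting, and the row fields (`page_id`, `chunk_index`, `text`) are assumptions; the source does not describe the actual chunking rules or table schema.

```python
def chunk_page(page_id: str, text: str, max_words: int = 50) -> list[dict]:
    """Group paragraphs into chunks of at most max_words words.

    A paragraph longer than max_words is split across several chunks.
    """
    chunks: list[list[str]] = []
    current: list[str] = []
    for paragraph in text.split("\n\n"):
        words = paragraph.split()
        if not words:
            continue
        # Start a new chunk if this paragraph would overflow the current one.
        if current and len(current) + len(words) > max_words:
            chunks.append(current)
            current = []
        current.extend(words)
        # Break up a single oversized paragraph.
        while len(current) > max_words:
            chunks.append(current[:max_words])
            current = current[max_words:]
    if current:
        chunks.append(current)
    return [
        {"page_id": page_id, "chunk_index": i, "text": " ".join(words)}
        for i, words in enumerate(chunks)
    ]


rows = chunk_page(
    "page-1",
    "alpha beta gamma\n\ndelta epsilon\n\nzeta eta theta iota",
    max_words=4,
)
for row in rows:
    print(row)
```

Each row would then be passed to whichever embedding model is in use, and the resulting vector stored next to `page_id` so a search hit can be traced back to its Notion page.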

What the BigQuery layer provides: operational history that’s too large and too structured for Notion pages, semantic search across the full knowledge base, and a machine-readable record of everything that has been done — which pieces of content exist, what was changed, what decisions were made and when.

How Sessions Use Both Layers

A typical session that requires deep operational context follows a four-step pattern. First, Claude reads the Claude Context Index from Notion and identifies relevant knowledge pages. Second, it fetches those pages and reads their metadata blocks. Third, for operational history (for example, “what has been published for this client in the last thirty days?”), it queries the BigQuery ledger directly. Finally, for knowledge gaps not covered by the index, it runs a semantic search against the embedded chunks.
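The “last thirty days” lookup might translate into a parameterized query along these lines. This is a sketch under stated assumptions: the table name `ops_ledger.content_published`, its columns, and the assumption that `published_at` is a DATE column are hypothetical, since the source does not show the ledger schema.

```python
from datetime import date, timedelta


def recent_content_query(client_id: str, today: date, days: int = 30):
    """Return (sql, params) for content published in the trailing window."""
    # Hypothetical table and column names; @name marks a named query parameter.
    sql = (
        "SELECT title, url, published_at\n"
        "FROM `ops_ledger.content_published`\n"
        "WHERE client_id = @client_id\n"
        "  AND published_at >= @since\n"
        "ORDER BY published_at DESC"
    )
    params = {"client_id": client_id, "since": today - timedelta(days=days)}
    return sql, params


sql, params = recent_content_query("example-client", date(2026, 5, 15))
print(sql)
print(params["since"])  # 2026-04-15
```

Keeping the values in named parameters rather than formatting them into the SQL string means the same query text can be reused for any client, and the values can be bound through the BigQuery client’s query parameters instead of string concatenation.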

The result is a session that starts with genuine institutional context rather than a blank slate. Claude knows how the operation works, what the relevant constraints are, and what has happened recently — not because it remembers the previous session, but because all of that information is accessible in structured, retrievable form.

The Maintenance Requirement

Persistent memory infrastructure requires persistent maintenance. The Notion knowledge layer stays current through the regular SOP review cycle and the practice of documenting decisions as they’re made. The BigQuery layer stays current through automated sync processes that push new content records and session logs as they’re created.

The sync isn’t set-and-forget: it requires periodic verification that records are being captured correctly and that new chunks are actually being embedded and stored. The overhead is modest, though: a few minutes of verification per week, plus occasional manual intervention when a sync process fails silently.

The system degrades if the maintenance lapses. A knowledge base that’s three months stale is worse than no knowledge base — it provides false confidence that Claude has current context when it doesn’t. The maintenance discipline is as important as the architecture.
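A weekly verification pass could be as simple as the two checks sketched below: one flags pages that have not been reviewed recently, the other flags pages that exist in Notion but never reached the ledger, which is how a silent sync failure would show up. The 90-day threshold, the function names, and the input shapes are assumptions for illustration.

```python
from datetime import date


def stale_pages(
    last_reviewed: dict[str, date], today: date, max_age_days: int = 90
) -> list[str]:
    """Return IDs of pages whose last review is older than max_age_days."""
    return sorted(
        page_id
        for page_id, reviewed in last_reviewed.items()
        if (today - reviewed).days > max_age_days
    )


def missing_from_ledger(notion_ids: set[str], ledger_ids: set[str]) -> list[str]:
    """Return IDs present in Notion but absent from the BigQuery ledger."""
    return sorted(notion_ids - ledger_ids)


today = date(2026, 5, 15)
reviews = {"page-a": date(2026, 5, 1), "page-b": date(2026, 1, 10)}

print(stale_pages(reviews, today))
print(missing_from_ledger({"page-a", "page-b", "page-c"}, {"page-a", "page-b"}))
```

Either list being non-empty is the signal to intervene before the stale or missing pages start shaping sessions.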

The Notion + BigQuery memory architecture is advanced infrastructure. We build and configure it for operations that are ready for it — not as a first Notion project, but as the next layer on top of a working system.

Tygart Media runs this infrastructure live. We know what the build and maintenance actually require.

Frequently Asked Questions

Why use BigQuery instead of just storing everything in Notion?

Notion is optimized for human-readable structured documents, not for large-scale programmatic data queries. Storing thousands of operational history records — content publishing logs, session outputs, embedded knowledge chunks — in Notion creates performance problems and makes precise programmatic queries slow. BigQuery handles that scale trivially and supports the SQL queries and vector similarity searches that make the operational history actually useful. Notion and BigQuery do different things well; the architecture uses each for what it’s good at.

Is this architecture accessible to non-engineers?

The Notion layer is. The BigQuery layer requires comfort with Google Cloud infrastructure, SQL, and API integration. Building and maintaining the BigQuery ledger is an engineering task. For operators without that background, the Notion layer alone — the Knowledge Lab, the claude_delta metadata standard, the Context Index — provides significant value and is fully accessible without engineering support. The BigQuery layer is the advanced extension, not the foundation.

What does “semantic search over embedded knowledge chunks” mean in practice?

When knowledge pages are embedded, each page (or section of a page) is converted into a numerical vector that represents its meaning. Semantic search finds pages whose vectors are close to the query vector, meaning pages that are conceptually similar to what you’re looking for, even if they don’t use the same words. In practice this means Claude can find relevant knowledge pages by describing what it needs rather than knowing the exact title or keyword. Across a large, varied knowledge base, this tends to be more reliable than keyword search, which misses pages that express the same idea in different wording.
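A toy version of “vectors close to the query vector” looks like this. The three-dimensional vectors are made up for illustration; real embeddings have hundreds or thousands of dimensions and come from an embedding model, and in production the ranking would more likely run inside BigQuery than in Python. Cosine similarity is used here as one common closeness measure, which is an assumption about the setup rather than something the source specifies.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_matches(query_vec: list[float], chunks: list[dict], k: int = 2) -> list[str]:
    """Return the page IDs of the k chunks most similar to the query."""
    scored = [
        (cosine_similarity(query_vec, chunk["embedding"]), chunk["page_id"])
        for chunk in chunks
    ]
    scored.sort(reverse=True)
    return [page_id for _, page_id in scored[:k]]


# Made-up vectors standing in for real embeddings.
chunks = [
    {"page_id": "page-publishing", "embedding": [0.9, 0.1, 0.0]},
    {"page_id": "page-billing", "embedding": [0.0, 0.2, 0.9]},
    {"page_id": "page-editing", "embedding": [0.7, 0.6, 0.1]},
]
query = [1.0, 0.2, 0.0]

print(top_matches(query, chunks))  # ['page-publishing', 'page-editing']
```

The billing chunk scores lowest because its vector points in a different direction from the query, regardless of which words either text contains.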