TL;DR: 96% of visitors to a restoration company’s website leave without calling. Retargeting ads keep your brand in front of them across the web for 30-90 days at $2-12 per thousand impressions, turning one-time visitors into warm leads for a fraction of the $129-156 per click that Google Ads costs in this vertical.

The 96% Problem

A property manager searches “water damage restoration near me” at 2 AM during an active flooding event. They click your site, scan the page, then click the back button to check two more companies. You never hear from them again.

This happens to 96% of your website visitors. They find you, evaluate you, and leave — not because you weren’t qualified, but because they were comparison shopping under duress. In restoration, the buying window is 2-4 hours during an emergency and 2-4 weeks during a planned remediation. If you’re not in front of them during that entire window, someone else is.

Retargeting solves this. A tracking pixel on your website tags each visitor’s browser, and the ad platform then serves your ads to that visitor on news sites, social media, and apps for 30-90 days after the initial visit. The cost: $2-12 per thousand impressions, compared to the $129-156 per click you’d pay for new Google Ads traffic in the restoration vertical.

How Retargeting Works for Restoration

The mechanics are straightforward. A JavaScript pixel from Google Ads, Facebook, or a dedicated platform like AdRoll fires when someone visits your site. That visitor is added to an audience list. When they browse other websites in the ad network, your ad appears — your brand, your phone number, your emergency response guarantee.

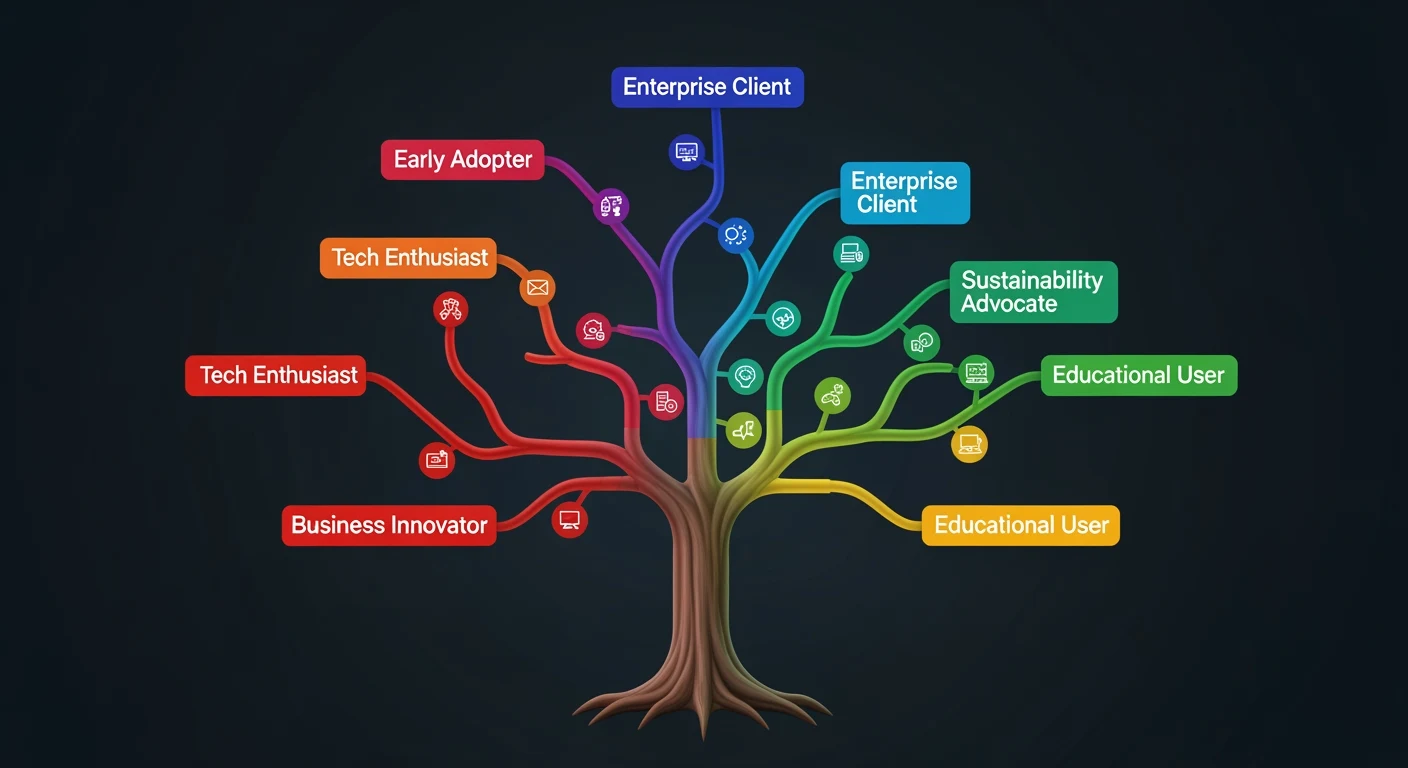

For restoration companies, the retargeting audience segments that drive the most signed contracts are emergency visitors who viewed your 24/7 response page but didn’t call, insurance claim visitors who viewed your “we work with all insurance carriers” page, and commercial property managers who viewed your commercial services page. Each segment gets different creative: the emergency segment sees “Still dealing with water damage? We respond in 60 minutes — call now.” The commercial segment sees “Trusted by 200+ property managers in [City]. Free damage assessment.”
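The segmentation above can be sketched as a simple rule table keyed on the page a visitor viewed. This is a minimal illustration, not any ad platform’s API: the URL prefixes, the `assign_segment` helper, and the `general` fallback segment are all assumptions, and in practice you would define the equivalent rules inside the audience builder of whichever platform you use.

```python
from typing import Optional

# Hypothetical URL path prefixes; substitute the paths your own site uses.
SEGMENT_RULES = [
    ("/emergency", "emergency"),    # 24/7 response page
    ("/insurance", "insurance"),    # "we work with all insurance carriers" page
    ("/commercial", "commercial"),  # commercial services page
]


def assign_segment(path: str, called: bool) -> Optional[str]:
    """Return the retargeting segment for a page view.

    Returns None when the visitor already called, because they are no
    longer a "visited but didn't call" prospect.
    """
    if called:
        return None
    normalized = path.lower()
    for prefix, segment in SEGMENT_RULES:
        if normalized.startswith(prefix):
            return segment
    # Assumed fallback for visitors who viewed none of the three key pages.
    return "general"


if __name__ == "__main__":
    print(assign_segment("/emergency-24-7-response", called=False))
    print(assign_segment("/commercial-services/", called=False))
```

Each returned segment name then maps to its own creative, so the emergency audience never sees the commercial ad and vice versa.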

The Math: Retargeting vs. Fresh Google Ads Traffic

Restoration is one of the most expensive verticals in Google Ads. According to our analysis of digital real estate valuations, water damage restoration keywords command CPCs of $129-156 in competitive markets. A $10,000/month Google Ads budget buys roughly 64-77 clicks.

That same $10,000 in retargeting buys 830,000 to 5,000,000 impressions — repeated exposure to people who already know your brand. The conversion rate on retargeted traffic runs 2-4x higher than cold search traffic because the visitor has already evaluated your site once.
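The arithmetic behind that comparison is easy to check. The sketch below uses only the figures quoted in this article (a $10,000 budget, $129-156 per click, $2-12 per thousand impressions); the function names are illustrative.

```python
import math


def clicks_for_budget(budget: float, cpc: float) -> int:
    """Whole clicks a budget buys at a given cost per click."""
    return math.floor(budget / cpc)


def impressions_for_budget(budget: float, cpm: float) -> int:
    """Whole impressions a budget buys at a given cost per thousand impressions."""
    return math.floor(budget * 1000 / cpm)


if __name__ == "__main__":
    budget = 10_000
    # Google Ads at the high and low ends of the quoted CPC range.
    print(clicks_for_budget(budget, 156), clicks_for_budget(budget, 129))
    # prints: 64 77
    # Retargeting at the high and low ends of the quoted CPM range.
    print(impressions_for_budget(budget, 12), impressions_for_budget(budget, 2))
    # prints: 833333 5000000
```

Clicks and impressions are not equivalent units, so this compares reach per dollar rather than leads per dollar.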

The optimal strategy isn’t either/or. It’s using Google Ads as a high-intent discovery engine to drive initial qualified traffic, then using retargeting to stay in front of the 96% who don’t convert immediately.

Platform Selection for Restoration

Google Display Network retargeting reaches the broadest audience — news sites, weather apps, recipe blogs, sports sites. For restoration, this is the primary channel because property managers and homeowners browse broadly during the decision period.

Facebook/Instagram retargeting is particularly effective for residential restoration because homeowners scroll social media during evenings and weekends — exactly when they’re processing insurance claims and evaluating contractors.

LinkedIn retargeting targets commercial property managers and facilities directors. If your restoration company does significant commercial work, LinkedIn retargeting to visitors of your commercial services pages delivers disproportionate ROI because the average commercial contract value is 5-10x residential.

The 90-Day Drip Sequence

Effective restoration retargeting isn’t showing the same ad for 90 days. It’s a sequenced campaign that mirrors the decision timeline.

Days 1-7 (Urgency phase): “Still need emergency restoration? We respond in 60 minutes, 24/7. Call [phone].” This catches the comparison shoppers who visited during an active emergency.

Days 8-30 (Trust phase): Rotate testimonials, before/after project photos, and certifications. “IICRC Certified. 500+ projects completed. See our work.” This builds credibility during the evaluation phase.

Days 31-90 (Nurture phase): Educational content — “5 Signs of Hidden Water Damage,” “What Your Insurance Company Won’t Tell You About Mold Claims.” This positions your company as the expert for future incidents and referrals.
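The three phases amount to a lookup on how many days have passed since the visit. Here is a minimal sketch, assuming the day of the first visit counts as day 1 and that ads stop after day 90; the `drip_phase` helper is hypothetical, and most ad platforms express the same idea as audience membership durations rather than code.

```python
from typing import Optional

# (first day, last day, phase) taken from the sequence described above.
DRIP_PHASES = [
    (1, 7, "urgency"),
    (8, 30, "trust"),
    (31, 90, "nurture"),
]


def drip_phase(day: int) -> Optional[str]:
    """Return the phase for a 1-based day count since the first visit.

    Returns None before day 1 or after day 90, when no ad should run.
    """
    for first, last, phase in DRIP_PHASES:
        if first <= day <= last:
            return phase
    return None


if __name__ == "__main__":
    for day in (1, 7, 8, 30, 31, 90, 91):
        print(day, drip_phase(day))
```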

What Most Restoration Companies Get Wrong

The most common mistake is running retargeting with the same generic ad to everyone forever. The second most common mistake is not excluding converters — continuing to serve ads to people who already called and signed a contract. The third is setting the frequency cap too high, showing the same ad 20+ times per day until the prospect actively resents your brand.

Set frequency caps at 3-5 impressions per day, exclude converted leads from your audience immediately, and rotate creative every 2 weeks. The goal is persistent presence, not harassment.
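Those three rules can be written down as a short eligibility check. This is only a sketch of the logic: the `Visitor` record and both helper functions are hypothetical, the cap of 3 is the low end of the 3-5 range above, and real campaigns would set these values in the ad platform’s frequency-cap and audience-exclusion settings.

```python
from dataclasses import dataclass

MAX_IMPRESSIONS_PER_DAY = 3   # low end of the 3-5 range recommended above
CREATIVE_ROTATION_DAYS = 14   # rotate creative every 2 weeks


@dataclass
class Visitor:
    converted: bool          # already called or signed a contract
    impressions_today: int   # ads this visitor has seen so far today


def should_serve_ad(visitor: Visitor, cap: int = MAX_IMPRESSIONS_PER_DAY) -> bool:
    """Serve only to non-converted visitors who are still under the daily cap."""
    return not visitor.converted and visitor.impressions_today < cap


def creative_index(day: int, num_creatives: int) -> int:
    """Pick which creative to show on a 1-based campaign day, cycling every 14 days."""
    return ((day - 1) // CREATIVE_ROTATION_DAYS) % num_creatives


if __name__ == "__main__":
    print(should_serve_ad(Visitor(converted=False, impressions_today=2)))
    print(should_serve_ad(Visitor(converted=True, impressions_today=0)))
    print(creative_index(day=15, num_creatives=3))
```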

Retargeting won’t replace your core digital strategy or your content engine. But it will capture the massive revenue you’re currently leaking every time a qualified visitor bounces without converting. At $2-12 CPM, it’s the cheapest insurance policy in your marketing budget.

{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Retargeting for Restoration Companies: The $12 Strategy That Turns Website Visitors Into Signed Contracts",
  "description": "96% of restoration website visitors leave without calling. Retargeting ads follow them for 30-90 days at $2-12 CPM — a fraction of the $150/click Google Ads cos",
  "datePublished": "2026-03-30",
  "dateModified": "2026-04-03",
  "author": {
    "@type": "Person",
    "name": "Will Tygart",
    "url": "https://tygartmedia.com/about"
  },
  "publisher": {
    "@type": "Organization",
    "name": "Tygart Media",
    "url": "https://tygartmedia.com",
    "logo": {
      "@type": "ImageObject",
      "url": "https://tygartmedia.com/wp-content/uploads/tygart-media-logo.png"
    }
  },
  "mainEntityOfPage": {
    "@type": "WebPage",
    "@id": "https://tygartmedia.com/retargeting-for-restoration-companies-the-12-strategy-that-turns-website-visitors-into-signed-contracts/"
  }
}