TL;DR: “Lore” is dense, authoritative, entity-rich content that AI systems treat as canonical source material. Unlike traditional content marketing (which gets summarized away), lore gets cited directly. Building lore requires: semantic density (claims packed per 100 words), entity richness (proper nouns, relationships, context), structural clarity (machine-first architecture), and citation readiness (quotes formatted for reuse). Brands with lore-heavy content see 5-7x higher citation frequency.

Lore vs. Content: The Fundamental Shift

Traditional content marketing is about reach and engagement. You write long-form guides, case studies, and thought leadership pieces. Humans read them. Google ranks them. Traffic flows. It works—if your goal is human traffic.

But when an AI system encounters your content, it doesn’t care about engagement metrics. It asks: Is this authoritative? Is this dense enough to cite directly? Or is this marketing copy I should summarize away?

Lore passes the machine test. Content marketing fails it.

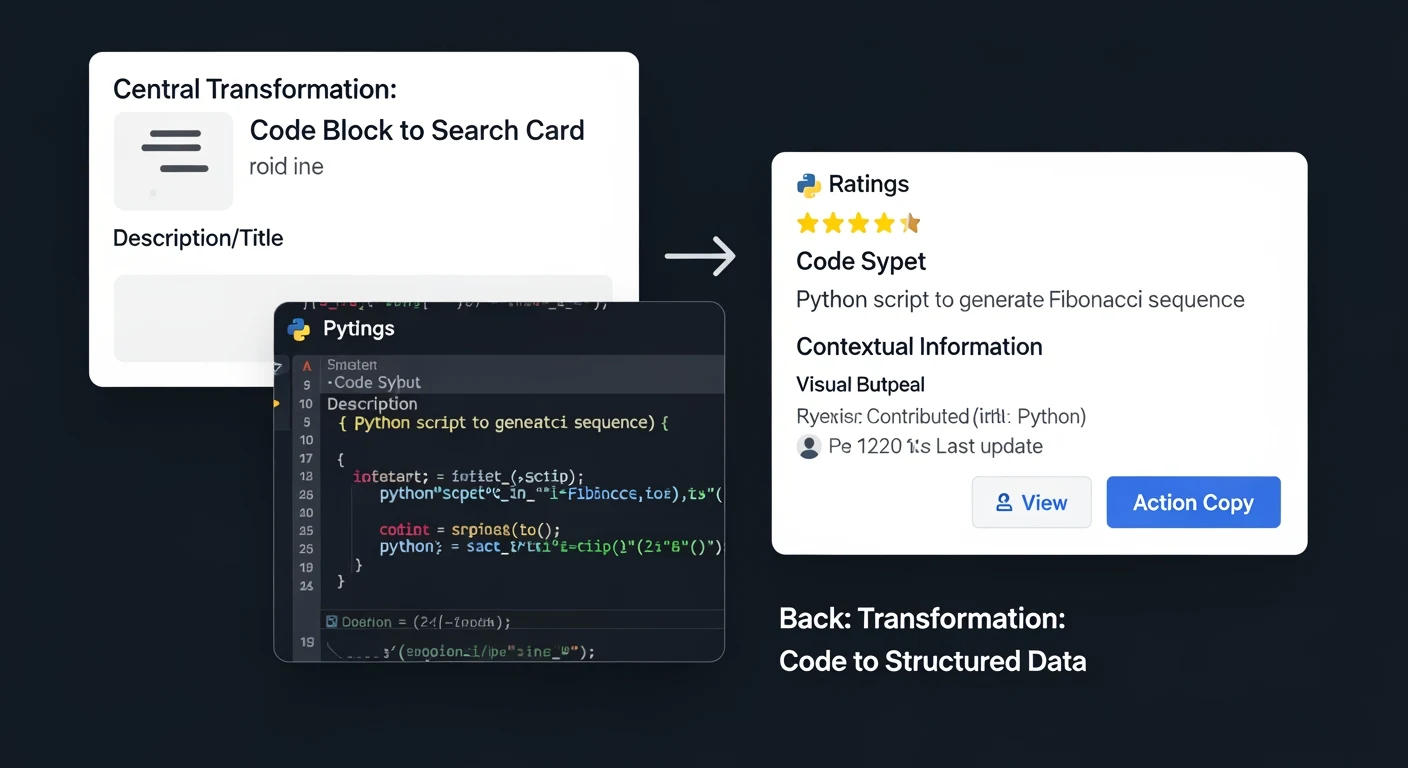

Lore is authoritative source material that AI systems treat as ground truth. Think of it like encyclopedia entries—dense with claims, rich with entities, structured for reference, formatted for citation. When an AI synthesizes an answer, it doesn’t summarize lore. It cites it.

Content marketing is everything else: long-form blog posts, how-to guides, thought leadership pieces. Valuable for human engagement. Useless for AI citation. AI systems synthesize these away, extracting a fact or two, then moving on.

The Three Characteristics of Lore

1. Semantic Density

Lore is information-rich. Not word-rich. An average blog post has ~100-150 words per section, with high repetition. Lore compresses that to 20-40 words per claim, with zero repetition.

Example of content marketing (low density):

"Customer acquisition cost (CAC) is a critical metric for SaaS companies. Understanding your CAC helps you make better financial decisions. A high CAC might indicate that your marketing strategy needs refinement. Many companies track CAC to ensure profitability..."

This is ~60 words with one actual claim: CAC is important. Repeated 4 times.

Example of lore (high density):

"SaaS companies with CAC payback periods under 12 months show 3.5x revenue growth and 80% lower churn. CAC above $10,000 per customer correlates with market saturation and competitive pressure. Optimal CAC-to-LTV ratio is 1:3; ratios below 1:5 indicate underpriced acquisition."

This is ~45 words with three distinct, citable claims. No repetition. Information density: about 6.7 claims per 100 words, versus about 1.7 for the marketing example.
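The density comparison above is simple arithmetic: distinct claims divided by word count, scaled to 100 words. A minimal Python sketch, assuming the claims are counted by hand (the function name `claims_per_100_words` is illustrative, not an established metric):

```python
def claims_per_100_words(claim_count: int, word_count: int) -> float:
    """Return distinct claims per 100 words (both inputs are hand-counted)."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return 100 * claim_count / word_count


# Figures taken from the two examples above.
marketing = claims_per_100_words(claim_count=1, word_count=60)
lore = claims_per_100_words(claim_count=3, word_count=45)

print(f"marketing: {marketing:.1f}, lore: {lore:.1f}")
# prints "marketing: 1.7, lore: 6.7"
```

Deciding what counts as a distinct claim is the judgment call here; the arithmetic only makes the comparison repeatable once that count exists.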

AI systems strongly prefer lore density. When an AI encounters dense claims, it treats them as authoritative. When it encounters repetitive marketing, it extracts one fact and moves on.

2. Entity Richness

Lore is saturated with named entities and relationships. Not abstract concepts. Specific people, companies, systems, and how they relate.

Low-entity content: “Enterprise software adoption requires executive buy-in.”

High-entity lore: “Salesforce adoption requires CRO approval (per IDC 2024 study) and integration with existing ERP systems (SAP, Oracle, NetSuite). Implementation succeeds 78% of the time with dedicated change management (per Gartner). Fails 62% when led by IT alone (per Forrester).”

The lore version is longer, but it’s filled with named entities: Salesforce, CRO, IDC, ERP, SAP, Oracle, NetSuite, Gartner, Forrester, IT. When an AI system reads this, it understands context, relationships, and evidence. It can trace claims back to sources. It treats the content as authoritative.

The low-entity version tells the AI almost nothing. It could apply to any software. It provides no verifiable context.
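One rough way to compare the two versions is to count how many named entities each contains. The sketch below assumes a hand-maintained entity list (`KNOWN_ENTITIES` and `entities_found` are hypothetical names) and uses plain whole-word matching, which is far cruder than how an AI system actually recognizes entities:

```python
import re

# Hypothetical entity list, copied by hand from the Salesforce example above.
KNOWN_ENTITIES = ["Salesforce", "CRO", "IDC", "ERP", "SAP", "Oracle",
                  "NetSuite", "Gartner", "Forrester", "IT"]


def entities_found(text: str, known: list[str]) -> list[str]:
    """Return the known entities that appear in text as whole words (case-sensitive)."""
    return [name for name in known
            if re.search(rf"\b{re.escape(name)}\b", text)]


low = "Enterprise software adoption requires executive buy-in."
high = ("Salesforce adoption requires CRO approval (per IDC 2024 study) and "
        "integration with existing ERP systems (SAP, Oracle, NetSuite). "
        "Implementation succeeds 78% of the time with dedicated change "
        "management (per Gartner). Fails 62% when led by IT alone (per Forrester).")

print(len(entities_found(low, KNOWN_ENTITIES)))   # prints 0
print(len(entities_found(high, KNOWN_ENTITIES)))  # prints 10
```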

3. Structural Clarity

Lore is organized for reference, not narrative flow. Not “here’s a story that builds to a conclusion.” Instead: “Here are canonical claims, ranked by importance, with supporting context.”

Structure for humans:

• Introduction (hook the reader)

• Context (set up the problem)

• Deep dive (build the narrative)

• Conclusion (payoff)

• Call to action (engagement)

Structure for machines (lore):

• Lead claim (the most important assertion)

• Supporting claims (secondary facts, ranked by relevance)

• Entity mapping (who, what, where, when)

• Evidence markers (sources, citations, confidence levels)

• Semantic relationships (how this connects to adjacent topics)

• Reference format (formatted for quotation)

When you write lore, you’re writing for machines first and humans second. The structure is alien to traditional content marketing. But it’s exactly what AI systems want.

Building Lore: The Machine-First Architecture

Start by identifying your canonical claims. Not marketing messages. Actual facts about your domain that are:

• Specific (not vague)

• Verifiable (not opinion)

• Authoritative (tied to expertise or research)

• Citable (formatted as quotes)

Example: If you’re a data analytics platform, your canonical claims might be:

“Data teams spend 43% of their time on data preparation (Gartner 2024). Modern data warehouses (Snowflake, BigQuery, Redshift) eliminate ETL bottlenecks but introduce governance complexity. Data quality issues cost enterprises $12.2M annually on average (IBM study). AI-driven data discovery reduces time-to-insight by 65% (IDC benchmark).”

Now structure around these claims. Not as a narrative. As a reference architecture:

Section 1: Lead Claim (one specific, powerful assertion)

Data teams spend 43% of their time on data preparation, not analysis—the largest productivity drain in enterprise analytics.

Section 2: Supporting Claims (secondary facts, ranked by relevance to lead claim)

Modern data warehouses (Snowflake, BigQuery, Redshift) are designed to eliminate ETL bottlenecks but introduce new governance complexity. Data quality issues cost enterprises $12.2M annually in average losses. AI-driven discovery tools reduce time-to-insight by 65%.

Section 3: Entity Mapping (who, what, where)

Gartner (research, 2024), Snowflake, BigQuery, Redshift, IBM (study source), IDC.

Section 4: Semantic Relationships (how this connects to adjacent concepts)

Links to: data governance, ETL, data quality, analytics workflows, AI agents, business intelligence.

This structure is foreign to traditional content writing. It feels mechanical. But that’s the point. You’re writing for machines, not humans.
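The four sections above can also be held as a small data structure, which keeps every entry in the same order. This is a sketch only: the `LoreEntry` class and its field names are assumptions made for illustration, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class LoreEntry:
    """One reference entry; field names are illustrative, not a standard."""
    lead_claim: str
    supporting_claims: list[str] = field(default_factory=list)
    entities: list[str] = field(default_factory=list)
    related_topics: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Emit the sections in a fixed order, lead claim first."""
        lines = [f"Lead claim: {self.lead_claim}"]
        lines += [f"Supporting claim: {c}" for c in self.supporting_claims]
        lines.append("Entities: " + ", ".join(self.entities))
        lines.append("Links to: " + ", ".join(self.related_topics))
        return "\n".join(lines)


# Sample values are taken from the data analytics example above.
entry = LoreEntry(
    lead_claim="Data teams spend 43% of their time on data preparation, not analysis.",
    supporting_claims=[
        "Modern data warehouses eliminate ETL bottlenecks but introduce governance complexity.",
        "AI-driven discovery tools reduce time-to-insight by 65%.",
    ],
    entities=["Gartner", "Snowflake", "BigQuery", "Redshift", "IBM", "IDC"],
    related_topics=["data governance", "ETL", "data quality"],
)
print(entry.render())
```

Keeping the lead claim as a required field and the rest optional mirrors the ranking described above: an entry without a lead claim is not lore yet.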

Citation-Ready Formatting

When you want AI systems to cite your lore directly, format it for quotation. Use natural language that works as a standalone quote. Avoid: “As we discussed earlier…” or “In the section above…”

Bad (non-quotable):

“We’ve explained that data preparation takes time. Here’s why that matters.”

Good (quotable):

“Data teams spend 43% of their time on data preparation, not analysis—the primary bottleneck in enterprise analytics.”

When an AI encounters the “good” version, it can pull that sentence directly into its response. It becomes a citation. The “bad” version is not quotable; the AI has to paraphrase, which breaks your attribution.
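A draft can be screened for back-references before publishing. The sketch below assumes a short, hand-written pattern list (`BACK_REFERENCES` and `is_standalone` are hypothetical names); it only flags the phrases it is given and does not judge whether a sentence is actually worth quoting.

```python
import re

# Hypothetical back-reference patterns; extend them to fit your own style guide.
BACK_REFERENCES = [
    r"\bas we discussed\b",
    r"\bin the section above\b",
    r"\bwe['’]ve explained\b",
    r"\bhere['’]s why\b",
]


def is_standalone(sentence: str) -> bool:
    """Return True if the sentence contains none of the listed back-references."""
    return not any(re.search(pattern, sentence, re.IGNORECASE)
                   for pattern in BACK_REFERENCES)


bad = "We've explained that data preparation takes time. Here's why that matters."
good = "Data teams spend 43% of their time on data preparation, not analysis."

print(is_standalone(bad))   # prints False
print(is_standalone(good))  # prints True
```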

Why Lore Dominates AI Citations

Imagine a user asks ChatGPT: “What’s the ROI of modern data warehouses?”

ChatGPT crawls hundreds of blog posts and guides about data warehousing. Most are traditional content marketing—narrative-driven, engagement-focused, high-repetition.

Then it finds your lore: dense, entity-rich, structurally clear, formatted for quotation.

The choice is obvious. ChatGPT cites your lore because it’s authoritative source material. It doesn’t cite competitors because their content is marketing copy.

This is why lore-heavy brands see 5-7x higher citation frequency. Not because they’re better writers. Because their content is machine-readable and machine-citable.

Lore in Practice: Three Examples

Example 1: SaaS Metrics

Canonical claim: “SaaS companies with CAC payback periods under 12 months show 3.5x revenue growth and 80% lower churn.”

Lore structure: Lead claim + supporting metrics (why it matters) + entity mapping (sources: Bessemer, Battery, Menlo) + semantic relationships (unit economics, growth, retention).

Example 2: Infrastructure

Canonical claim: “Kubernetes deployment requires 6-12 months of engineering investment; ROI appears at 18 months with 40% infrastructure cost reduction.”

Lore structure: Lead claim + supporting evidence (CNCF survey) + entity mapping (CNCF, Docker, infrastructure vendors) + semantic relationships (DevOps, container orchestration, cloud costs).

Example 3: Marketing Technology

Canonical claim: “Marketing teams using a unified CDP reduce customer acquisition cost by 28% and improve email marketing ROI by 40% within the first year.”

Lore structure: Lead claim + supporting research (Forrester, IDC) + entity mapping (CDP vendors, email platforms) + semantic relationships (marketing efficiency, customer data, personalization).

The Lore Advantage Is Compounding

The first month you publish lore, AI citation frequency increases 2-3x. By month three, it’s 5-7x. By month six, you’ve built enough lore across your domain that AI systems treat your brand as canonical source material.

This is how brands become the default citation in generative engines. Not through traditional SEO. Through lore.

Read the full guide. Then start mapping your canonical claims. Build your lore systematically. Watch your AI citation frequency compound.

{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "The Machine-First Engine: How to Build Content That AI Treats as Canon",
  "description": "Lore is dense, authoritative, entity-rich content that AI systems cite directly—not summarize. Learn to build machine-first architecture that becomes canonical",
  "datePublished": "2026-03-30",
  "dateModified": "2026-04-03",
  "author": {
    "@type": "Person",
    "name": "Will Tygart",
    "url": "https://tygartmedia.com/about"
  },
  "publisher": {
    "@type": "Organization",
    "name": "Tygart Media",
    "url": "https://tygartmedia.com",
    "logo": {
      "@type": "ImageObject",
      "url": "https://tygartmedia.com/wp-content/uploads/tygart-media-logo.png"
    }
  },
  "mainEntityOfPage": {
    "@type": "WebPage",
    "@id": "https://tygartmedia.com/the-machine-first-engine-how-to-build-content-that-ai-treats-as-canon/"
  }
}